Phishing in the Age of AI: Why DMARC Is the First Line of Defense Against Next-Generation Email Threats

In January 2024, a finance employee at Arup, one of the world’s largest engineering firms, joined a video call with his chief financial officer and several senior colleagues. They discussed a secret business deal requiring immediate wire transfers. The employee recognized their faces, their voices, their office backgrounds. Over the next several days, he initiated 15 bank transfers totaling $25 million. Every person on that video call was a deepfake (PRMIA Arup Case Study).

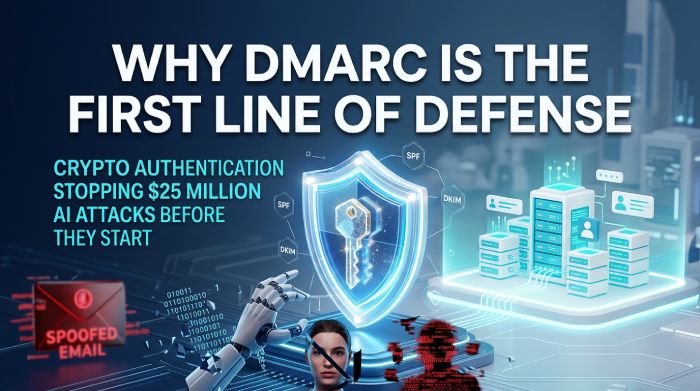

The attack began, as most BEC attacks do, with an email, one that appeared to come from the legitimate domain of Arup’s CFO. Had Arup’s domain been enforcing DMARC at p=reject, that initial spoofed email would never have reached the employee’s inbox, and the entire $25 million attack chain would have been stopped before it started.

This is the core argument of this article: in an era where AI can generate flawless phishing text, clone any voice in seconds, and produce real-time deepfake video calls, the one thing AI cannot fake is your domain’s cryptographic authentication. DMARC enforcement, the protocol that verifies whether an email genuinely came from the domain it claims, is the first and most reliable line of defense against next-generation email threats. Not the last line. Not the only line. But the first one.

The AI Threat Landscape: What Has Changed

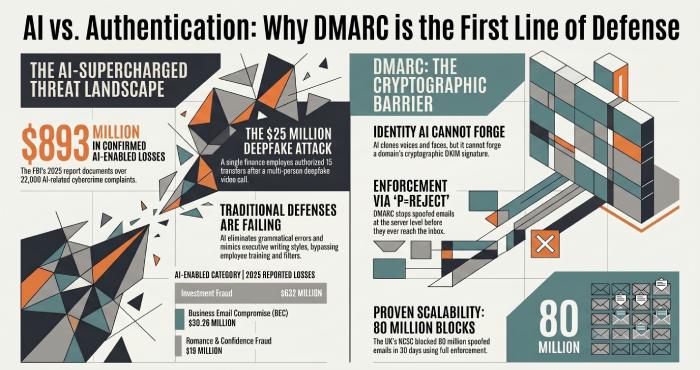

The FBI’s 2025 Internet Crime Report, released April 7, 2026, documented AI-enabled cybercrime as a distinct category for the first time in the bureau’s 25-year history. The numbers are sobering:

| AI-Enabled Cybercrime Metric | 2025 Data |
|---|---|
| Total AI-related complaints | 22,364 |
| Total AI-related losses | $893 million |
| AI-linked investment fraud losses | $632 million |
| AI-linked BEC losses | $30.26 million (confirmed AI nexus) |
| AI-linked recruitment fraud losses | $13 million (deepfake interviews) |
| AI-linked romance/confidence fraud losses | $19 million |
| Total BEC losses (all methods) | $3.046 billion |
| Total cybercrime losses | $20.877 billion |

Source: FBI IC3 2025 Annual Report (released April 7, 2026)

The FBI explicitly cautioned that these numbers are conservative: “AI-related counts are dependent upon the quality and wording of information provided by the complainant; therefore, it is possible the number could be higher.” SecureWorld’s analysis noted that AI was officially attributed to less than 8% of investment fraud losses, but the total category hit $8.6 billion, suggesting AI involvement is far broader than what victims recognize and report (SecureWorld Analysis).
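The attribution gap is easy to check with a few lines of arithmetic. The sketch below uses only the figures quoted in the table and in the SecureWorld analysis; the variable names are illustrative, and the $8.6 billion investment fraud total is taken as quoted rather than independently verified.

```python
# All figures in millions of USD, as quoted in this article.
ai_investment_losses = 632.0
total_investment_losses = 8_600.0   # "$8.6 billion", per the SecureWorld analysis
ai_bec_losses = 30.26
total_bec_losses = 3_046.0
ai_total_losses = 893.0
total_cybercrime_losses = 20_877.0


def share(part: float, whole: float) -> float:
    """Return `part` as a percentage of `whole`."""
    return 100.0 * part / whole


investment_share = share(ai_investment_losses, total_investment_losses)
bec_share = share(ai_bec_losses, total_bec_losses)
overall_share = share(ai_total_losses, total_cybercrime_losses)

print(f"AI-attributed share of investment fraud losses: {investment_share:.1f}%")
print(f"AI-attributed share of BEC losses: {bec_share:.1f}%")
print(f"AI-attributed share of all cybercrime losses: {overall_share:.1f}%")
```

The confirmed AI share comes out at roughly 7% of investment fraud losses, about 1% of BEC losses, and about 4% of all reported losses, which is consistent with the FBI's caution that attribution depends on what complainants recognize and report.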

$893 million in confirmed AI-enabled cybercrime losses. 22,364 complaints. And the FBI says the real number is almost certainly higher because most victims do not realize AI was involved. This is not a future threat. It is a present crisis documented in the FBI’s official data.

How AI Supercharges Every Stage of a Phishing Attack

Traditional phishing relied on volume and luck, blasting thousands of poorly written emails and hoping a few recipients would click. AI has transformed phishing into a precision weapon:

Stage 1: Reconnaissance

AI can analyze social media profiles, LinkedIn connections, corporate websites, and breached datasets to build detailed profiles of targets. The European Payments Council’s 2025 Fraud Trends Report notes that AI “enables the analysis of large datasets to identify and target victims with personalized scams, such as CEO fraud or APP fraud” (EPC 2025 Payment Threats Report).

Stage 2: Content Generation

Tools like WormGPT and FraudGPT, purpose-built AI chatbots available on dark web forums since mid-2023, can generate phishing emails that are grammatically perfect, contextually appropriate, and personalized to individual targets. An INTERPOL innovation snapshot confirmed that FraudGPT was “mainly used for malware code creation and phishing” through “the creation of emails, messages, and websites” designed to steal credentials (INTERPOL Innovation Snapshots Sept 2023). The FBI’s 2025 report states that “chat generators can quickly create official-sounding emails mimicking a company’s CEO or other officials” containing “phishing links or directions to wire funds.”

Stage 3: Voice Cloning and Deepfake Video

AI voice cloning now requires as little as three seconds of audio to produce a convincing replica. The European Parliament’s research found that 49% of companies in selected countries had experienced audio and video deepfake fraud, and one in four adults had experienced or known someone affected by an AI voice cloning scam (European Parliament, Scam Calls in Times of Generative AI). The NAIC’s 2025 Cybersecurity Insurance Market Report documented a 442% surge in vishing (voice phishing) in 2024 (NAIC 2025 Report).

The Arup case is the defining example. The $25 million loss resulted from a deepfake video call that was so convincing the targeted employee, who had initially suspected phishing, dropped his guard entirely. The FS-ISAC’s Deepfakes in the Financial Sector report provides a comprehensive taxonomy of deepfake attack scenarios already observed in the industry (FS-ISAC Deepfakes Report).

Stage 4: Scale

The FBI’s 2025 report notes that “subjects in investment scams often use AI to enhance their conversations with potential victims allowing the scammers to quickly generate thousands of conversations that appear different to each prospective victim.” AI doesn’t just make individual attacks better. It makes them cheap to run at a scale no human team could match.

Why Traditional Phishing Defenses Are Failing Against AI

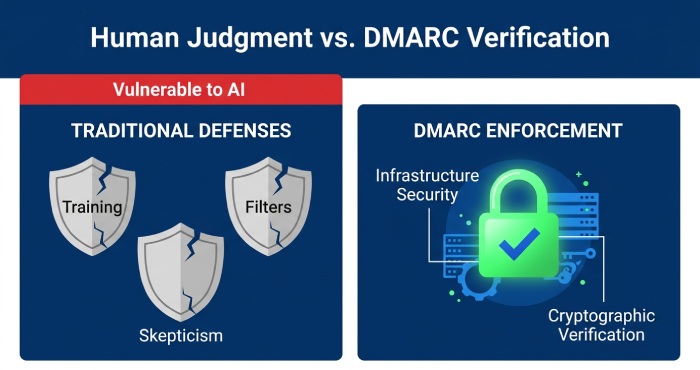

For decades, organizations relied on three primary defenses against phishing:

1. Employee training to spot suspicious emails. AI-generated text eliminates grammatical errors, awkward phrasing, and other traditional red flags. When the phishing email reads exactly like your CEO’s writing style, because AI was trained on their public communications, training alone is insufficient.

2. Email filtering and gateway security. Traditional filters look for known malware signatures, suspicious URLs, and blacklisted sender IPs. AI-crafted emails use novel text, clean URLs (often legitimate services redirected), and fresh infrastructure. The DHS assessment on AI’s impact on criminal activities confirms that AI enables attackers to “bypass language barriers and increase the reach and success rate of social engineering campaigns” (DHS AI Impact Assessment).

3. Skepticism about unexpected requests. When the follow-up call uses a cloned voice, or the video call shows a deepfake of the actual CFO in their actual office, skepticism dissolves. The Arup employee initially suspected phishing. The deepfake video call overrode that instinct.

All three defenses share a common vulnerability: they depend on human judgment at the point of receipt. DMARC enforcement works differently. It operates before the email ever reaches a human, at the infrastructure level, where cryptographic verification cannot be fooled by AI-generated content.

Why DMARC Is the First Line of Defense Against AI-Powered Phishing

DMARC enforcement addresses the one thing AI cannot fake: the cryptographic identity of your email domain. Here is why this matters:

AI can generate a perfect phishing email. But if your domain enforces DMARC at p=reject, the spoofed email from your-domain.com will be rejected by the receiving server before it ever reaches the inbox. The AI-generated content, no matter how convincing, never gets an audience.

AI can clone a voice for a follow-up call. But the attack chain almost always begins with an email. In the Arup case, the deepfake video call was triggered by an initial email from the spoofed CFO. Block that email, and the entire multi-million-dollar attack chain collapses.

AI can scale attacks to thousands of targets simultaneously. DMARC blocks every single one of those spoofed emails, regardless of volume. When the UK’s NCSC achieved 100% DMARC enforcement across central government, 80 million spoofed emails were blocked in a single 30-day period (NCSC ACD 6th Year Report). That is 80 million AI-enhanced opportunities eliminated at the infrastructure level.

AI can bypass traditional email filters. But DMARC is not a content filter. It checks whether a message passes SPF or DKIM for a domain aligned with the visible From address. No amount of AI sophistication lets an unauthorized server satisfy your SPF record or produce a valid DKIM signature without your private key.
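To make the mechanism concrete, here is a minimal sketch of the decision a receiving server makes. It is a simplification under stated assumptions, not a full implementation: the names `AuthResults` and `dmarc_disposition` are hypothetical, SPF and DKIM results are supplied as inputs rather than computed, and the organizational domain is approximated as the last two labels (real receivers consult the Public Suffix List).

```python
from dataclasses import dataclass


def org_domain(domain: str) -> str:
    """ASSUMPTION: approximate the organizational domain as the last two labels.
    Real receivers use the Public Suffix List instead."""
    labels = domain.lower().rstrip(".").split(".")
    return ".".join(labels[-2:])


def aligned(auth_domain: str, from_domain: str) -> bool:
    """Relaxed alignment: both domains share the same organizational domain."""
    return org_domain(auth_domain) == org_domain(from_domain)


@dataclass
class AuthResults:
    from_domain: str   # domain in the visible From: header
    spf_pass: bool     # did SPF pass for the envelope sender?
    spf_domain: str    # envelope sender (MAIL FROM) domain
    dkim_pass: bool    # did a DKIM signature verify?
    dkim_domain: str   # d= domain of that signature


def dmarc_disposition(r: AuthResults, policy: str) -> str:
    """Return 'deliver', 'quarantine', or 'reject' for a published policy."""
    spf_ok = r.spf_pass and aligned(r.spf_domain, r.from_domain)
    dkim_ok = r.dkim_pass and aligned(r.dkim_domain, r.from_domain)
    if spf_ok or dkim_ok:
        return "deliver"  # DMARC pass: content filters still apply afterwards
    return {"none": "deliver", "quarantine": "quarantine", "reject": "reject"}[policy]


# A spoofed message: SPF passes, but only for the attacker's own domain.
spoofed = AuthResults("your-domain.com", True, "attacker.example", False, "")
# A legitimate message signed by a subdomain of the real domain.
legit = AuthResults("your-domain.com", False, "", True, "mail.your-domain.com")

print(dmarc_disposition(spoofed, "reject"))  # reject
print(dmarc_disposition(spoofed, "none"))    # deliver
print(dmarc_disposition(legit, "reject"))    # deliver
```

Note that the message body never enters the decision. That is why the quality of the AI-generated text is irrelevant to the outcome, and also why a policy of p=none offers monitoring but no protection.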

AI can write the perfect phishing email. AI can clone the CEO’s voice. AI can deepfake a video call. But AI cannot forge your domain’s cryptographic authentication. That is what DMARC verifies. That is why it is the first line of defense.

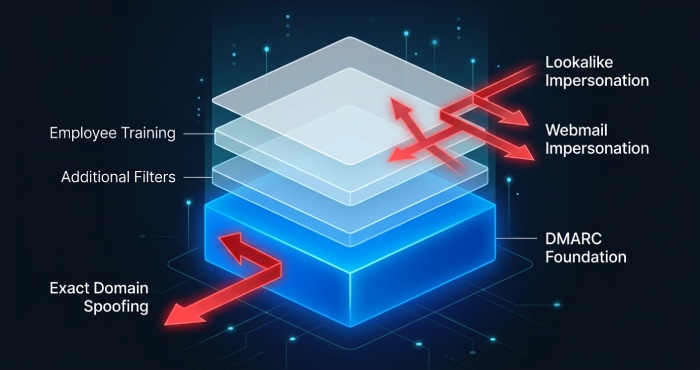

DMARC Is the First Line, Not the Only Line

It is important to be precise about what DMARC does and does not do. DMARC prevents domain spoofing, emails that appear to come from your exact domain. It does not prevent:

Lookalike domain attacks (e.g., your-d0main.com instead of your-domain.com), cousin domain attacks, or free webmail impersonation (e.g., ceo.yourcompany@gmail.com). These require additional layers: display name filtering, lookalike domain monitoring, employee training, and advanced email threat detection.
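Lookalike monitoring can start very simply. The sketch below flags candidate domains that match a protected domain after undoing a few digit-for-letter swaps, or that sit within one character edit of it. The substitution map and the distance threshold are illustrative assumptions; production tools also handle Unicode homoglyphs, transpositions, and alternate TLDs.

```python
# ASSUMPTION: a small, illustrative map of digit-for-letter substitutions.
HOMOGLYPHS = str.maketrans({"0": "o", "1": "l", "3": "e", "5": "s"})


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance, computed one row at a time."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete from a
                            curr[j - 1] + 1,      # insert into a
                            prev[j - 1] + cost))  # substitute
        prev = curr
    return prev[-1]


def is_lookalike(candidate: str, protected: str, max_distance: int = 1) -> bool:
    """True if `candidate` resembles `protected` without being identical to it."""
    candidate, protected = candidate.lower(), protected.lower()
    if candidate == protected:
        return False  # the exact domain is DMARC's job, not a lookalike
    if candidate.translate(HOMOGLYPHS) == protected.translate(HOMOGLYPHS):
        return True
    return edit_distance(candidate, protected) <= max_distance


print(is_lookalike("your-d0main.com", "your-domain.com"))   # True
print(is_lookalike("your-domains.com", "your-domain.com"))  # True
print(is_lookalike("example.org", "your-domain.com"))       # False
```

A check like this could run against newly registered domains or against sender domains seen in inbound mail. It complements DMARC rather than replacing it.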

But here is the critical point: DMARC is the foundation upon which all other defenses are built. Without it, attackers do not need lookalike domains because they can use your actual domain. With it, they are forced into inferior alternatives that are easier to detect and filter. As the California Western Law Review’s analysis of AI-weaponized cybercrime notes, organizations should consider “deploying their own machine learning algorithms to identify anomalies in email headers or domain spoofing” as a core defense (California Western Law Review, AI Weaponization). DMARC addresses the domain-spoofing half of that recommendation, and it is already standardized and supported by every major mailbox provider.

What to Do Now: A Practical Action Plan for the AI Threat Era

Layer 1: DMARC Enforcement (Block Domain Spoofing)

Publish a DMARC record with RUA reporting. Identify all legitimate senders. Progress to p=reject. Use a platform like DMARC Report to visualize your aggregate reports and manage the journey. This eliminates exact-domain spoofing, the foundation of most BEC attacks.
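The three steps can be sketched in code. The example below parses a DMARC TXT record, shows a possible staged progression toward p=reject, and summarizes which source IPs fail authentication in an aggregate (RUA) report. The domain, mailbox, IP addresses, and sample report are placeholders, and the XML is trimmed to the few elements the sketch reads; real aggregate reports carry more fields.

```python
import xml.etree.ElementTree as ET
from collections import Counter


def parse_dmarc_record(txt: str) -> dict[str, str]:
    """Split a DMARC TXT record into its tag=value pairs."""
    tags = {}
    for part in txt.split(";"):
        if "=" in part:
            key, value = part.split("=", 1)
            tags[key.strip().lower()] = value.strip()
    return tags


# Hypothetical rollout, published at _dmarc.your-domain.com one stage at a time.
STAGES = [
    "v=DMARC1; p=none; rua=mailto:dmarc-reports@your-domain.com",
    "v=DMARC1; p=quarantine; rua=mailto:dmarc-reports@your-domain.com",
    "v=DMARC1; p=reject; rua=mailto:dmarc-reports@your-domain.com",
]


def is_enforcing(txt: str) -> bool:
    """True only when the record asks receivers to reject failing mail."""
    tags = parse_dmarc_record(txt)
    return tags.get("v") == "DMARC1" and tags.get("p") == "reject"


# Placeholder aggregate report, reduced to the elements used below.
SAMPLE_REPORT = """<feedback>
  <record>
    <row>
      <source_ip>192.0.2.10</source_ip>
      <count>120</count>
      <policy_evaluated><dkim>pass</dkim><spf>pass</spf></policy_evaluated>
    </row>
  </record>
  <record>
    <row>
      <source_ip>203.0.113.7</source_ip>
      <count>45</count>
      <policy_evaluated><dkim>fail</dkim><spf>fail</spf></policy_evaluated>
    </row>
  </record>
</feedback>"""


def failing_sources(report_xml: str) -> Counter:
    """Count messages per source IP that passed neither DKIM nor SPF."""
    failures = Counter()
    for row in ET.fromstring(report_xml).iter("row"):
        evaluated = row.find("policy_evaluated")
        if evaluated.findtext("dkim") != "pass" and evaluated.findtext("spf") != "pass":
            failures[row.findtext("source_ip")] += int(row.findtext("count"))
    return failures


print([is_enforcing(stage) for stage in STAGES])  # [False, False, True]
print(failing_sources(SAMPLE_REPORT))             # Counter({'203.0.113.7': 45})
```

Each failing source is either a legitimate sender that still needs SPF or DKIM configured, or a spoofer. Sorting one from the other is the work that has to be finished before moving from p=none to p=reject.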

Layer 2: AI-Aware Employee Training

Update training programs to address AI-generated threats. Employees need to understand that perfect grammar and familiar writing styles are no longer reliable indicators of legitimacy. Train specifically on out-of-band verification: any request involving money, credentials, or sensitive data must be verified through a separate channel (phone call to a known number, in-person confirmation, predetermined code word).

Layer 3: Advanced Email Security

Deploy email security solutions that use behavioral analysis and AI-powered detection, not just signature-based filtering, to identify anomalies in email patterns, sender behavior, and content. The best solutions integrate with DMARC reporting to provide a unified view of authentication and threat data.

Layer 4: Out-of-Band Verification Protocols

Establish mandatory out-of-band verification for any financial transaction, wire transfer, or sensitive data request. The PRMIA’s analysis of the Arup case explicitly recommends “verification measures that operate outside the communication channel, such as out-of-band callbacks, predetermined authentication codes or mandatory secondary approval.” This is the defense that catches what email authentication cannot: deepfake video calls, cloned voice follow-ups, and compromised accounts.
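A rule like this can be written down precisely enough to enforce in a payment workflow. The following is a minimal sketch under assumed names: `PaymentRequest`, `TRUSTED_CHANNELS`, and `may_release` are hypothetical, and the trusted channel list simply mirrors the examples given in this article (callback to a known number, in-person confirmation, predetermined code word).

```python
from dataclasses import dataclass, field

# ASSUMPTION: only checks the employee initiates through these channels count.
TRUSTED_CHANNELS = {"callback_known_number", "in_person", "code_word"}


@dataclass
class PaymentRequest:
    amount_usd: float
    request_channel: str                  # where the request arrived, e.g. "email"
    handler: str                          # employee processing the request
    verifications: set[str] = field(default_factory=set)  # checks performed
    approvers: set[str] = field(default_factory=set)      # people who signed off


def may_release(req: PaymentRequest) -> bool:
    """Release funds only after an independent check and a second approver."""
    independently_verified = bool(req.verifications & TRUSTED_CHANNELS)
    second_approval = bool(req.approvers - {req.handler})
    return independently_verified and second_approval


# An Arup-style scenario: the only "confirmation" is a video call the
# requester set up, which is not an independent channel.
deepfake_case = PaymentRequest(25_000_000, "email", "finance_employee",
                               verifications={"video_call"})
# The same request after a callback to a known number and a second sign-off.
verified_case = PaymentRequest(25_000_000, "email", "finance_employee",
                               verifications={"callback_known_number"},
                               approvers={"controller"})

print(may_release(deepfake_case))  # False
print(may_release(verified_case))  # True
```

The design choice that matters is that a channel chosen by the requester never counts as verification, however convincing the faces and voices on it appear.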

The Bottom Line

AI has not invented new types of email attacks. It has made existing attacks dramatically more effective, more scalable, and harder to detect through traditional means. Phishing emails that once had grammatical errors now read like authentic executive communications. Voice calls that once sounded robotic now use cloned voices indistinguishable from the real person. Video calls that once required a real human now deploy real-time deepfakes convincing enough to fool experienced professionals into authorizing $25 million in wire transfers.

In this environment, the defense layer that operates before the human sees the email, that verifies identity at the cryptographic level rather than relying on human judgment, becomes the most critical layer in the stack. That layer is DMARC enforcement.

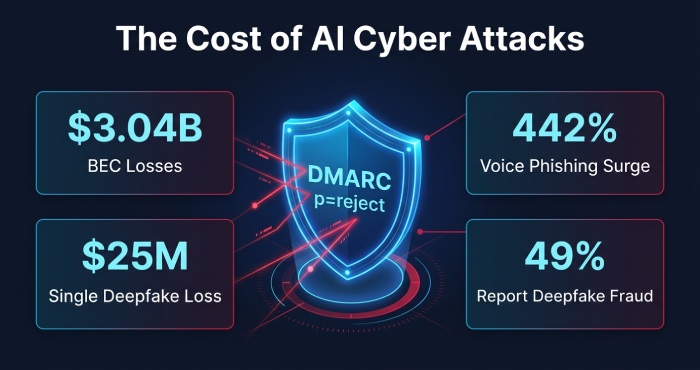

$893 million in confirmed AI-enabled cybercrime losses in 2025. $3.046 billion in BEC losses. $25 million stolen through a single deepfake video call. 442% surge in voice phishing. 49% of companies reporting deepfake fraud. 22,364 AI-related complaints to the FBI. And these attack chains typically begin with a spoofed email.

DMARC enforcement stops that email. It is the first line of defense in the age of AI. And for any organization that has not yet moved to p=reject, the window to act is closing.

Sources & References

All statistics are sourced from government reports, law enforcement data, institutional research, and industry publications.

1. FBI IC3 2025 Annual Report, Source for 22,364 AI complaints, $893M AI losses, $3.046B BEC losses, $30.26M AI-linked BEC, AI crime category breakdown, and FBI quote on AI content creation.

URL: https://www.ic3.gov/AnnualReport/Reports/2025_IC3Report.pdf

2. FBI Press Release, Cryptocurrency and AI Scams, Official FBI announcement confirming AI-enabled crime statistics and “Take a Beat” guidance.

URL: https://www.fbi.gov/news/press-releases/cryptocurrency-and-ai-scams-bilk-americans-of-billions

3. PRMIA, Arup Deepfake Fraud Case Study, Detailed analysis of the $25 million Arup deepfake BEC attack including attack mechanics and defense recommendations.

URL: https://prmia.org/common/Uploaded%20files/eCyber/PRMIA%20Case%20study%20-%20ARUP.pdf

4. European Parliament, Scam Calls in Times of Generative AI, Source for 49% deepfake fraud rate, 1 in 4 adults affected by voice cloning, Netherlands vishing surge.

URL: https://www.europarl.europa.eu/RegData/etudes/ATAG/2025/777940/EPRS_ATA(2025)777940_EN.pdf

5. European Payments Council, 2025 Payment Threats and Fraud Trends Report, Source for AI-enabled fraud analysis in payments sector, CEO fraud via AI, personalized scam targeting.

6. NAIC, 2025 Cybersecurity Insurance Market Report, Source for 442% vishing surge in 2024, $2.77B BEC losses, insurance market data.

URL: https://content.naic.org/sites/default/files/inline-files/2025_Cybersecurity_Insurance%20Report.pdf

7. FS-ISAC, Deepfakes in the Financial Sector, Comprehensive deepfake threat taxonomy for financial institutions with scenario analysis and control recommendations.

8. DHS, Impact of Artificial Intelligence on Criminal and Illicit Activities, U.S. Department of Homeland Security assessment of AI threats including phishing, social engineering, and fraud.

9. INTERPOL, Innovation Snapshots (September 2023), Source for WormGPT and FraudGPT documentation as dark web AI tools for phishing and malware creation.

10. California Western Law Review, The Darwinian Effect: Weaponization of AI, Academic legal analysis of AI-powered BEC, WormGPT/FraudGPT, and defense strategies including domain authentication.

URL: https://scholarlycommons.law.cwsl.edu/cgi/viewcontent.cgi?article=1780&context=cwlr

11. UK NCSC Active Cyber Defence 6th Year Report, Source for 100% UK central government DMARC enforcement and 80 million blocked spoofed emails in 30 days.

URL: https://www.ncsc.gov.uk/files/acd6-summary.pdf

12. SecureWorld, AI-Enabled Fraud Analysis, Analysis of FBI IC3 2025 data including AI attribution gaps and conservative baseline estimates.

URL: https://www.secureworld.io/industry-news/ai-enabled-fraud-topped-893m-fbi

13. Fisher Phillips, Deepfake Scammers Steal $25 Million, Legal analysis of the Arup deepfake BEC case with five practical defense recommendations.

14. Falade (2023), AI-Powered Social Engineering, Academic analysis of WormGPT, FraudGPT, and generative AI social engineering attack vectors.

General Manager

Founder and General Manager of DuoCircle. Product strategy and commercial lead for DMARC Report's 2,000+ customer base.
