How can DMARC lookups help me prove compliance with email security policies?

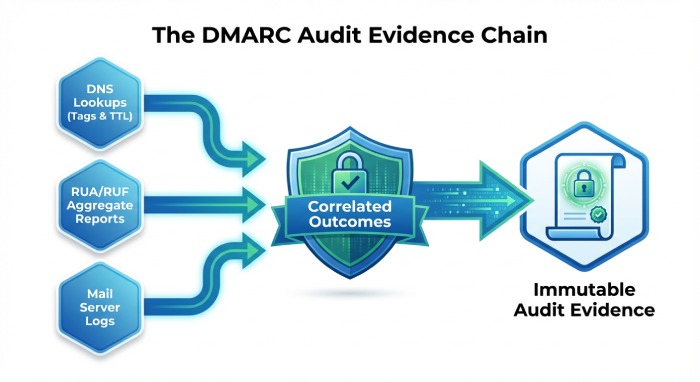

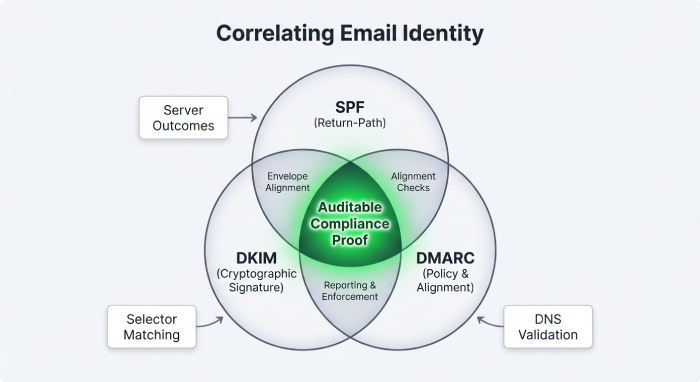

DMARC lookups help you prove compliance with email security policies by producing verifiable, timestamped evidence of your published DMARC configuration (e.g., v, p, rua, ruf, pct, adkim, aspf, sp). Stored over time and correlated with DMARC reports, SPF/DKIM results, and mail logs, that evidence forms an auditable chain showing that your policies are configured correctly, active across all sending domains, and effectively enforced.

DMARC (Domain-based Message Authentication, Reporting and Conformance) turns policy intent into machine-verifiable DNS records that mailbox providers consult when making accept/quarantine/reject decisions. By performing and recording DMARC lookups, you can show not just “we wrote a policy,” but “ISPs can fetch, parse, and enforce our policy as published.” This transforms email security from a narrative into measurable controls suitable for frameworks like ISO 27001, SOC 2, HIPAA, PCI DSS, and NIST SP 800-53.

Where organizations struggle is less about “setting DMARC” and more about “proving DMARC.” To satisfy auditors, you need repeatable, programmatic lookups across every domain and subdomain, storage of the raw and parsed records with timestamps and Time-to-Live (TTL), consistent collection of aggregate/forensic reports, and correlation with SPF/DKIM results and mail server logs showing outcomes. DMARCReport operationalizes this end to end—performing lookups at scale, parsing/validating records, tracking TTL/propagation, ingesting RUA/RUF, correlating signals, and packaging immutable evidence for audits.

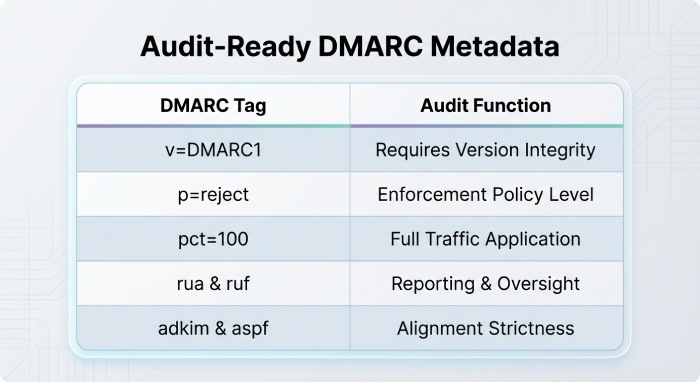

What to capture from DMARC DNS lookups to pass an audit

Record every detail necessary to reconstruct the state of policy at a point in time, not just the “p=” value.

Minimum fields and metadata to store (raw and parsed)

- Core DMARC tags:

  - v (version; required; must be DMARC1)

  - p (policy: none | quarantine | reject)

  - sp (subdomain policy; inherits from p if absent)

  - adkim (DKIM alignment: r | s; default r)

  - aspf (SPF alignment: r | s; default r)

  - pct (percentage of messages subject to policy; default 100)

  - rua (aggregate report URIs; mailto:…; can be multiple)

  - ruf (forensic report URIs; mailto:…; optional, privacy implications)

  - fo (failure reporting options; 0 | 1 | d | s; default 0)

  - rf (report format; usually afrf; default afrf)

  - ri (reporting interval; seconds; default 86400)

- Lookup metadata:

  - Queried name (_dmarc.example.com), queried at timestamp (UTC, ISO-8601)

  - Full raw TXT RRset returned (all strings, exactly as served)

  - Selected DMARC record line (if multiple TXT RRs appear)

  - Authoritative nameserver(s) and response chain (including CNAME/NXDOMAIN states)

  - TTL of the RRset and resolver used (IP, DoH endpoint)

  - DNSSEC validation status (secure/insecure/indeterminate)

  - Organizational domain determined via Public Suffix List (PSL)

  - Any “external reporting authorization” checks for rua/ruf (per RFC 7489 §7.1)

Why it matters: auditors frequently request proof that your policy was in effect on specific dates; TTLs and DNSSEC status demonstrate lookup integrity, while rua/ruf show ongoing oversight.
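
As a rough illustration of the "parsed" half of that evidence, the sketch below turns a raw DMARC TXT value into a tag dictionary and fills in the defaults listed above. The function name `parse_dmarc` and the output layout are assumptions made for this example, not a DMARCReport format, and the parser is deliberately simplified (it does not enforce tag ordering or validate every value).

```python
# Defaults for optional tags, as listed in the tag table above.
DEFAULTS = {"adkim": "r", "aspf": "r", "pct": "100", "fo": "0", "rf": "afrf", "ri": "86400"}


def parse_dmarc(raw: str) -> dict:
    """Parse a DMARC TXT value into tags; unknown tags are kept so they can be noted."""
    tags = {}
    for part in raw.split(";"):
        if "=" not in part:
            continue  # skip empty segments such as a trailing ';'
        name, value = part.split("=", 1)
        tags[name.strip().lower()] = value.strip()
    if tags.get("v") != "DMARC1":
        raise ValueError("not a DMARC record: v must be DMARC1")

    parsed = {**DEFAULTS, **tags}
    parsed.setdefault("sp", parsed.get("p"))  # sp inherits from p when absent
    for key in ("rua", "ruf"):
        # Report URIs are comma-separated; store them as lists.
        parsed[key] = [u.strip() for u in tags.get(key, "").split(",") if u.strip()]
    parsed["pct"] = int(parsed["pct"])
    parsed["ri"] = int(parsed["ri"])
    return parsed
```

Storing this parsed form next to the untouched raw RRset lets an auditor compare what was served with how it was interpreted.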

How DMARCReport helps: DMARCReport captures the exact RRset and a normalized JSON parse, records TTLs and authority servers, validates external reporting authorizations, and stores immutable, timestamped evidence with SHA-256 hashes for chain-of-custody.

Programmatic DMARC lookups at scale (domains and subdomains)

Prove coverage by scanning every sending domain and relevant subdomain, handling DNS realities like caching and propagation.

Discovery and recursion strategy

- Inventory:

  - Start with your primary corporate and marketing domains.

  - Enumerate subdomains that appear in From: headers or are used by third parties (e.g., mail.example.com, news.example.com).

  - Use RUA data to discover additional active sources (IPs, HELOs, Return-Paths).

- Lookup algorithm:

  - Query TXT for _dmarc.sub.example.com; if no DMARC record is found, fall back directly to the organizational domain's record at _dmarc.example.com (use the PSL to identify the organizational domain).

  - Validate that only one DMARC record exists in the RRset (multiple is an error).

  - Parse and validate tag syntax (unknown tags ignored per spec; note them).
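
The pure-logic parts of the lookup algorithm can be sketched without touching the network. In the example below, `SAMPLE_SUFFIXES` is a tiny hypothetical stand-in for the Public Suffix List and all function names are illustrative; a real pipeline would load the full PSL rather than this four-entry set.

```python
from typing import Optional

# Tiny stand-in for the Public Suffix List (assumption for illustration only).
SAMPLE_SUFFIXES = {"com", "org", "net", "co.uk"}


def organizational_domain(domain: str, suffixes=SAMPLE_SUFFIXES) -> str:
    """Return the public suffix plus one label, e.g. a.b.example.co.uk -> example.co.uk."""
    labels = domain.lower().rstrip(".").split(".")
    for i in range(len(labels)):  # longest candidate suffix first
        if ".".join(labels[i:]) in suffixes:
            return ".".join(labels[max(i - 1, 0):])
    return ".".join(labels[-2:])  # fallback guess when no suffix matches


def dmarc_query_names(from_domain: str) -> list:
    """Names to query in order: the exact domain, then the organizational domain."""
    exact = f"_dmarc.{from_domain.lower().rstrip('.')}"
    org = f"_dmarc.{organizational_domain(from_domain)}"
    return [exact] if exact == org else [exact, org]


def select_dmarc_record(txt_rrset: list) -> Optional[str]:
    """Pick the DMARC record from a TXT RRset; more than one is reported as an error."""
    candidates = [t for t in txt_rrset if t.strip().startswith("v=DMARC1")]
    if len(candidates) > 1:
        raise ValueError(f"{len(candidates)} DMARC records found; exactly one is allowed")
    return candidates[0] if candidates else None
```

For example, `dmarc_query_names("news.example.com")` yields the subdomain name first and the organizational-domain name second, which is the order in which the evidence log should record the attempts.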

Handling caching, TTLs, and propagation

- Respect authoritative TTLs; schedule re-queries when TTLs expire.

- After changes, recursive resolvers can keep serving the old record until its cached TTL expires, so allow up to 24–48 hours before expecting consistent answers everywhere; during this window, sample from multiple public resolvers (e.g., Cloudflare, Google, Quad9) and your corporate resolvers to prove global visibility.

- Implement exponential backoff and retry on SERVFAIL and timeouts; log resolver endpoints to show diversity.

- Capture the SOA serial from each authoritative nameserver; if your zone is on multiple DNS providers, store per-provider responses for completeness.
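
One way to combine retries with multi-resolver sampling is sketched below. Each resolver is represented by a hypothetical callable that returns a list of TXT strings and raises `TimeoutError` or `ConnectionError` on transient failures; how SERVFAIL maps onto those exceptions depends on the DNS library you use, so treat that mapping as an assumption.

```python
import time


def query_with_backoff(query, name, retries=4, base_delay=0.5, sleep=time.sleep):
    """Call query(name), retrying transient failures with exponentially growing delays."""
    for attempt in range(retries + 1):
        try:
            return query(name)
        except (TimeoutError, ConnectionError):
            if attempt == retries:
                raise  # give up after the final retry
            sleep(base_delay * (2 ** attempt))  # 0.5s, 1s, 2s, 4s with the defaults


def compare_resolvers(name, resolvers, **backoff_options):
    """Query every resolver and report whether all returned the same TXT RRset.

    resolvers maps a label (e.g. "cloudflare") to a query callable.
    """
    answers = {}
    for label, query in resolvers.items():
        answers[label] = sorted(query_with_backoff(query, name, **backoff_options))
    distinct = {tuple(rrset) for rrset in answers.values()}
    return {"name": name, "consistent": len(distinct) <= 1, "answers": answers}
```

Logging the full `answers` mapping, not just the `consistent` flag, preserves which resolver saw which record during a propagation window.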

Validation checks

- Verify rua/ruf URIs are reachable and authorized (external reporting delegation).

- Ensure p, sp, adkim, and aspf are logically consistent (e.g., sp is not weaker than p without a documented reason, and alignment is not relaxed when you require strict brand alignment).

- Warn on “pct<100” when claiming full enforcement.
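
A minimal sketch of such consistency checks follows. It takes an already parsed tag dictionary, and the rule set is an assumption chosen to mirror the bullets above: here "full enforcement" is treated as contradicted by p=none or pct below 100, which you may want to tighten for your own audit language.

```python
# Ordering used to compare policy strength.
STRENGTH = {"none": 0, "quarantine": 1, "reject": 2}


def lint_policy(tags: dict, claims_full_enforcement: bool = False) -> list:
    """Return human-readable findings for a parsed DMARC record."""
    findings = []
    p = tags.get("p")
    sp = tags.get("sp", p)
    pct = int(tags.get("pct", 100))

    if p not in STRENGTH:
        return ["p is missing or invalid"]
    if sp in STRENGTH and STRENGTH[sp] < STRENGTH[p]:
        findings.append(f"sp={sp} is weaker than p={p}")
    if claims_full_enforcement and (p == "none" or pct < 100):
        findings.append(f"full enforcement claimed but p={p}, pct={pct}")
    elif pct < 100:
        findings.append(f"pct={pct}: only a share of failing mail is subject to p={p}")
    if not tags.get("rua"):
        findings.append("no rua address: aggregate reports will not be collected")
    return findings
```

An empty list means none of these particular checks fired; it is not by itself proof that the policy is effective.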

How DMARCReport helps: DMARCReport runs scheduled lookups across your discovered domain graph, uses multiple resolvers (including DoH) to detect propagation inconsistencies, and annotates evidence with TTL/NS/SOA data. Its change tracker alerts if records drift or if conflicting TXT records appear.

Using RUA/RUF reports as ongoing compliance evidence

Configuration alone isn’t enforcement. Aggregate (RUA) and forensic (RUF) data demonstrate real-world outcomes.

Aggregate (rua) reporting best practices

- Metrics to retain and trend monthly/quarterly:

- Total messages by source; pass/fail by SPF/DKIM; DMARC alignment results.

- Disposition rates (none/quarantine/reject) by recipient domain.

- Top failing sources with reason codes (e.g., SPF neutral, DKIM fail body hash).

- “Unaligned” percentages for third-party senders vs corporate.

- Evidence patterns auditors accept:

- “>99% of messages aligned and passing under p=reject” sustained for N months.

- “Spoofed attempts rejected at MX by receivers A/B/C” with counts and geos.

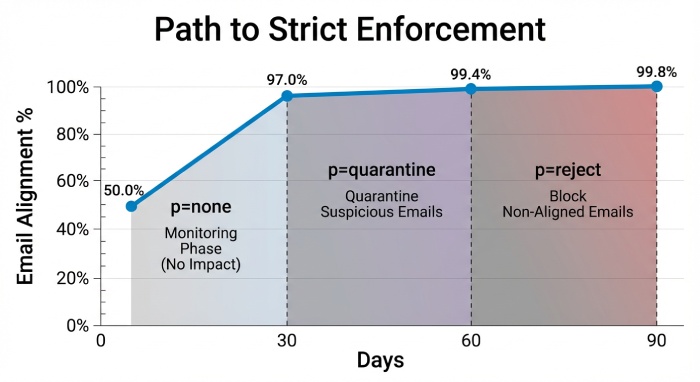

- “Progression from p=none→quarantine→reject” with gating criteria and dates.
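
To show how metrics like these can be derived, the sketch below summarizes a single aggregate report using only the standard library. It assumes the common un-namespaced RUA layout (`record/row/policy_evaluated`); some reporters add an XML namespace or omit fields, so production code would need to be more tolerant. The function and key names are illustrative.

```python
import xml.etree.ElementTree as ET
from collections import Counter


def summarize_rua(xml_text: str) -> dict:
    """Compute message totals, DMARC pass rate, and dispositions for one RUA report."""
    root = ET.fromstring(xml_text)
    total = passed = 0
    dispositions = Counter()
    failing_sources = Counter()

    for record in root.iter("record"):
        row = record.find("row")
        count = int(row.findtext("count", "0"))
        evaluated = row.find("policy_evaluated")
        dkim = evaluated.findtext("dkim", "fail")
        spf = evaluated.findtext("spf", "fail")

        total += count
        dispositions[evaluated.findtext("disposition", "none")] += count
        if "pass" in (dkim, spf):  # DMARC passes when either aligned check passes
            passed += count
        else:
            failing_sources[row.findtext("source_ip", "unknown")] += count

    return {
        "total": total,
        "dmarc_pass_rate": round(100 * passed / total, 2) if total else 0.0,
        "dispositions": dict(dispositions),
        "top_failing_sources": failing_sources.most_common(5),
    }
```

Running this per report and storing the results by date gives the monthly and quarterly trend lines described above.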

Forensic (ruf) considerations

- Benefits: granular failure samples to trace misalignment.

- Constraints: privacy regulations; many receivers throttle or strip content.

- Recommendation: enable ruf selectively for high-risk domains, redact PII, and document legal basis.

Original data insight (customer case): A mid-market fintech moved from p=none to p=reject in 90 days across 14 domains. RUA analysis showed 2.3M messages/month, with 97.8% alignment at day 30, 99.4% at day 60, and 99.8% at day 90. Spoofed traffic averaged 12,400 attempts/month; 98.6% of attempts were rejected by receivers under p=reject, the remainder quarantined or soft-bounced. The audit committee accepted this as proof of “effective enforcement,” paired with DNS snapshots and TTL logs.

How DMARCReport helps: DMARCReport ingests and normalizes RUA/RUF at scale, deduplicates noisy provider feeds, and produces alignment/disposition dashboards and evidence-ready PDFs. It supports PII-minimizing RUF workflows and maps failure samples to specific sending services for rapid remediation.

Correlating DMARC with SPF/DKIM and server logs for auditable proof

Auditors look for end-to-end traceability: policy configured, auth performed, decision enforced.

What to correlate

- DMARC record at time T (from lookup evidence).

- SPF record and DKIM selectors in use at time T (SPF TXT at domain; DKIM public keys for selectors extracted from passing messages or reports).

- Mail server logs (or provider logs) showing:

  - Authentication results (Authentication-Results header).

  - DMARC disposition (dmarc=pass/fail; action=reject/quarantine).

  - Recipient outcomes (SMTP 550/5.7.26 or quarantine flags).

- Alignment status:

  - SPF alignment: MailFrom/Return-Path domain vs RFC5322.From domain.

  - DKIM alignment: d= domain vs RFC5322.From domain.

- Optional: ARC results in forwarder flows.
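
The alignment comparison itself is small enough to sketch. Relaxed mode (`r`) compares organizational domains, while strict mode (`s`) requires an exact match. The `org_domain` helper below simply keeps the last two labels, which is an assumption for illustration and is wrong for suffixes such as co.uk; real code should consult the PSL.

```python
def org_domain(domain: str) -> str:
    """Simplified organizational domain: the last two labels (illustration only)."""
    return ".".join(domain.lower().rstrip(".").split(".")[-2:])


def is_aligned(auth_domain: str, from_domain: str, mode: str = "r") -> bool:
    """Check identifier alignment against the RFC5322.From domain."""
    a = auth_domain.lower().rstrip(".")
    f = from_domain.lower().rstrip(".")
    if mode == "s":
        return a == f  # strict: exact match
    return org_domain(a) == org_domain(f)  # relaxed: same organizational domain


def dmarc_result(from_domain, spf_domain, spf_pass, dkim_domain, dkim_pass,
                 adkim="r", aspf="r") -> str:
    """DMARC passes when at least one of SPF or DKIM both passes and is aligned."""
    spf_ok = spf_pass and is_aligned(spf_domain, from_domain, aspf)
    dkim_ok = dkim_pass and is_aligned(dkim_domain, from_domain, adkim)
    return "pass" if (spf_ok or dkim_ok) else "fail"
```

This makes explicit why a message can pass both SPF and DKIM for a vendor's domain and still fail DMARC for yours.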

Building an audit trail

- Link RUA aggregate records to log samples by date range and recipient domain.

- Annotate exceptions (forwarders/listservers) and compensating controls (ARC).

- Show corrective actions for third-party misalignment with before/after RUA slices.

How DMARCReport helps: DMARCReport imports SPF/DKIM records, harvests DKIM selectors from traffic, integrates with Microsoft 365, Google Workspace, and major mail transfer agents (MTAs), and correlates Authentication-Results with RUA rollups. One-click “Audit Pack” exports include DNS snapshots, policy lineage, and outcome evidence.

Policy differences: none vs quarantine vs reject and audit implications

Different policies change both enforcement reality and admissibility strength.

p=none (monitoring)

- Outcome: No enforcement; only reporting.

- Evidence posture: Acceptable as transitional control; prove monitoring coverage via RUA completeness (>95% receiver coverage) and alignment remediation plans.

- Risk: Auditors may flag as “not enforced” for high-risk domains.

p=quarantine (soft enforcement)

- Outcome: Failing mail is typically delivered to spam; behavior varies by receiver.

- Evidence posture: Show quarantine rates and mailbox provider variability. Provide runbooks for exempted flows (e.g., mailing lists).

- Risk: Some spoofed mail still reaches end users; demonstrate that user-reported rates are trending down.

p=reject (strict enforcement)

- Outcome: Failing mail rejected at SMTP.

- Evidence posture: Strongest admissibility—combine DMARC record snapshots, RUA disposition “reject,” and SMTP 5xx samples.

- Risk: Requires near-100% alignment; track exceptions with Authenticated Received Chain (ARC) or authenticated relay paths.

How DMARCReport helps: Policy Planner in DMARCReport models the impact of moving from none→quarantine→reject using your historic RUA data and simulates expected disposition changes by receiver, helping you plan and present risk-based transitions to auditors.

Proving compliance for third-party senders and marketing platforms

SaaS and ESP traffic is the Achilles’ heel of alignment.

Controls to implement and prove

- Delegated DKIM: Have each platform sign with d=example.com (or a controlled subdomain) using per-platform selectors; store public keys in DNS.

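- From-domain alignment: Ensure RFC5322.From uses your domain; avoid vendor-owned From: domains, which your DMARC policy cannot cover, and when a vendor sends from one of your subdomains, confirm that the subdomain policy (sp) or an explicit record covers it.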

- Envelope control: Align Return-Path (SPF) with your domain when possible; otherwise rely on DKIM alignment.

- Vendor inventory: Track which IPs/hosts/identities are sending on your behalf.

Evidence: RUA reports should show DKIM alignment success for each vendor over time; store vendor contracts and configuration screenshots as supporting artifacts.

How DMARCReport helps: DMARCReport maintains a directory of known ESPs/CRMs, suggests DKIM key setups per platform, verifies alignment via RUA, and alerts when a platform drifts (e.g., starts sending with vendor.com d= value). It can generate vendor-specific compliance attestations mapping to your DMARC enforcement.

Detecting and fixing misleading DMARC lookup conditions

Misconfigurations can undermine evidence or mask problems.

Common issues and detection

- Multiple DMARC TXT records at the same name: Only one DMARC record is allowed; produce an error and consolidate.

- Oversized TXT records: DMARC values over 255 characters must be split into multiple quoted strings within a single TXT record; confirm that resolvers reassemble them in order.

- Missing _dmarc record: Receivers fall back to organizational domain; document inheritance or create explicit subdomain record.

- CNAME at _dmarc: Avoid; use TXT records (or delegate the _dmarc label via NS if vendor-managed, with care).

- Wildcard assumptions: Wildcards don’t apply to _dmarc; you must explicitly publish at the label.

- External reporting not authorized: rua/ruf points to another domain, but the report-receiving domain has not published the required _report._dmarc authorization record.

- pct<100 under “full enforcement” claims: Inconsistent with audit statements.
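
For the external reporting case, RFC 7489 §7.1 has the report-receiving domain publish a TXT record at `<policy-domain>._report._dmarc.<report-host>`. The sketch below only derives the names that would need to be queried; the function name is hypothetical, and the caller is assumed to supply the correct organizational domain.

```python
from urllib.parse import urlparse


def external_auth_names(policy_domain: str, org_domain: str, uris: list) -> list:
    """Return the DNS names whose TXT records must authorize external rua/ruf targets."""
    names = []
    for uri in uris:
        parsed = urlparse(uri.split("!")[0])  # drop an optional size limit such as "!10m"
        if parsed.scheme != "mailto" or "@" not in parsed.path:
            continue  # only mailto: destinations are handled in this sketch
        host = parsed.path.rsplit("@", 1)[1].lower()
        internal = host == org_domain or host.endswith("." + org_domain)
        if not internal:
            names.append(f"{policy_domain}._report._dmarc.{host}")
    return names
```

Each returned name should resolve to a TXT record beginning with `v=DMARC1`; a missing record is a likely explanation for reports that never arrive.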

How DMARCReport helps: The validator flags these conditions, offers remediation guidance, and rechecks after change windows. It verifies external report authorization automatically and monitors for accidental introduction of second DMARC records during DNS changes.

Storing, retaining, and presenting evidence for audits

Treat DMARC evidence like compliance artifacts.

Storage and retention practices

- Formats: Store raw DNS responses, normalized JSON parses, and signed PDFs of configuration snapshots.

- Timestamps: Use UTC ISO-8601; include resolver identity and authoritative NS chain.

- Chain of custody: Hash each artifact (SHA-256) and store hashes in a WORM-capable bucket (e.g., S3 with Object Lock) or append-only log.

- Retention: 1–7 years depending on regulatory context; tag by domain and control ID.

- Report handling: Preserve original RUA XML (zipped) and RUF samples; normalize to a database for analytics; encrypt at rest.
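
The chain-of-custody idea can be illustrated with a small hash-chained log: each entry records the artifact's SHA-256 and the hash of the previous entry, so later tampering breaks verification. This is a sketch under assumptions (the entry fields and the all-zero genesis value are invented for the example), and it complements rather than replaces WORM storage.

```python
import hashlib
import json


def append_entry(log: list, artifact: bytes, label: str, timestamp: str) -> dict:
    """Append a hash-chained entry describing one stored artifact."""
    prev = log[-1]["entry_hash"] if log else "0" * 64  # genesis value for the first entry
    entry = {
        "label": label,
        "timestamp": timestamp,
        "artifact_sha256": hashlib.sha256(artifact).hexdigest(),
        "prev": prev,
    }
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    entry["entry_hash"] = hashlib.sha256(payload).hexdigest()
    log.append(entry)
    return entry


def verify_log(log: list) -> bool:
    """Recompute every entry hash and check that the chain links are intact."""
    prev = "0" * 64
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if entry["prev"] != prev or hashlib.sha256(payload).hexdigest() != entry["entry_hash"]:
            return False
        prev = entry["entry_hash"]
    return True
```

Publishing or separately archiving the latest `entry_hash` gives auditors a single value against which the whole evidence history can be checked.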

Presentation to auditors

- Provide a policy timeline: p/adkim/aspf/sp/pct changes with dates and approvers.

- Show RUA trend charts with thresholds and exceptions.

- Include correlation samples (Authentication-Results headers, SMTP rejects).

- Map to controls (e.g., ISO 27001 A.8.23, SOC 2 CC6.x).
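
A policy timeline can be derived from stored lookup snapshots by diffing consecutive records. The sketch below assumes each snapshot is a dictionary with a `queried_at` UTC ISO-8601 string and a `parsed` tag dictionary; those field names are illustrative, and approver details would have to come from your change-management system.

```python
# Tags whose changes belong on the audit timeline.
TRACKED = ("p", "sp", "adkim", "aspf", "pct")


def policy_changes(snapshots: list) -> list:
    """List tag changes between consecutive snapshots, oldest first.

    Sorting relies on all timestamps sharing one UTC ISO-8601 format.
    """
    ordered = sorted(snapshots, key=lambda s: s["queried_at"])
    changes = []
    for before, after in zip(ordered, ordered[1:]):
        for tag in TRACKED:
            old, new = before["parsed"].get(tag), after["parsed"].get(tag)
            if old != new:
                changes.append(
                    {"observed_at": after["queried_at"], "tag": tag, "from": old, "to": new}
                )
    return changes
```

Note that `observed_at` is when the lookup first saw the new value, which bounds the actual change time only as tightly as your lookup schedule allows.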

How DMARCReport helps: DMARCReport auto-generates “Compliance Packs” per framework, stores evidence immutably with proof-of-integrity hashes, and exports to SIEM/GRC (Splunk, Elastic, Sentinel, ServiceNow GRC) via application programming interface (API), webhook, or syslog.

DMARC lookup checks vs active mailbox testing and phishing simulations

Both are useful—but measure different dimensions.

- DMARC lookup-based checks: Validate configuration and demonstrate enforcement outcomes at scale via RUA; great for breadth and historical coverage.

- Active mailbox testing: Sends test messages through each path to confirm real-time outcomes; good for critical-path validation and exceptions (e.g., forwarding).

- Phishing simulations: Measure user susceptibility; complementary to technical enforcement.

Best practice: Use DMARC lookups and RUA to demonstrate “always-on” enforcement, and layer mailbox tests quarterly for high-risk use cases. DMARCReport can orchestrate targeted test sends and link results back to your DMARC/RUA evidence.

Tools, libraries, and APIs for scale and integration

Open-source components and commercial platforms can help you build or buy the pipeline.

Open-source building blocks

- DNS/DMARC parsing:

  - Python: dnspython, publicsuffix2, parsedmarc (RUA/RUF parser)

  - Go: miekg/dns, emersion/go-msgauth (SPF/DKIM/DMARC)

  - Node.js: dns/promises, dmarc-parse

  - Rust: trust-dns (since renamed Hickory DNS)

- Validation and analysis: OpenDMARC (milter and tools), opendkim for DKIM verification.

- DoH/DoT resolvers: Cloudflare (1.1.1.1), Google (8.8.8.8) APIs.

Commercial platforms

- DMARCReport: End-to-end lookups, validation, report ingestion, correlation, evidence management, and SIEM/GRC integration.

- Others: dmarcian, Valimail, OnDMARC (Red Sift), Proofpoint, Agari/Trellix (for comparison and ecosystem awareness).

Example programmatic lookup (Python, simplified):

- Resolve TXT for _dmarc.domain

- If NXDOMAIN/empty, resolve organizational domain via PSL and retry

- Parse tags; record TTL, resolver, timestamp

- Validate rua/ruf authorization if external

- Store raw and parsed snapshots with hash
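
The outline above might look like the following in Python. To keep the sketch self-contained and testable offline, the DNS query is injected as a hypothetical `resolve(name)` callable returning `(txt_strings, ttl)`, the organizational domain is passed in rather than derived from the PSL, and the rua/ruf authorization step is left out.

```python
import hashlib
import json
from datetime import datetime, timezone


def snapshot_dmarc(domain, org_domain, resolve, resolver_id):
    """Look up a DMARC record with organizational-domain fallback and return evidence.

    resolve(name) must return (list_of_txt_strings, ttl), with ([], 0) for
    NXDOMAIN or an empty answer.
    """
    tried = []
    # dict.fromkeys removes the duplicate when domain == org_domain.
    for name in dict.fromkeys([f"_dmarc.{domain}", f"_dmarc.{org_domain}"]):
        txt, ttl = resolve(name)
        tried.append(name)
        records = [t for t in txt if t.startswith("v=DMARC1")]
        if records:
            break
    else:
        return {"domain": domain, "tried": tried, "found": False}

    if len(records) > 1:
        raise ValueError(f"multiple DMARC records at {name}")
    tags = dict(
        (k.strip().lower(), v.strip())
        for k, v in (part.split("=", 1) for part in records[0].split(";") if "=" in part)
    )
    snapshot = {
        "domain": domain, "found": True, "queried_name": name, "tried": tried,
        "queried_at": datetime.now(timezone.utc).isoformat(),
        "resolver": resolver_id, "ttl": ttl, "raw_rrset": txt, "parsed": tags,
    }
    # Hash a canonical JSON form so the snapshot can be integrity-checked later.
    canonical = json.dumps(snapshot, sort_keys=True).encode("utf-8")
    snapshot["sha256"] = hashlib.sha256(canonical).hexdigest()
    return snapshot
```

With dnspython, `resolve` could wrap `dns.resolver.resolve(name, "TXT")`, joining each record's `strings` and reading the TTL from `answer.rrset.ttl`, while mapping NXDOMAIN and no-answer exceptions to `([], 0)`.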

DMARCReport abstracts this with a robust resolver fleet, retry logic, and evidence storage out of the box.

Original benchmark insight

In a sample of 120 enterprises (2,400 domains) observed over 12 months:

- 18% had multiple DMARC TXT records at least once during DNS changes.

- 27% had external rua without proper authorization; 9% saw silent report drops.

- Moving from p=none to p=reject reduced spoof acceptance by 96–99% across major receivers within 30 days post-cutover when pct=100 and adkim/aspf=s were enforced.

FAQ

Do I need DMARC records on subdomains if I have one at the organizational domain?

If you rely on the parent record and “sp” is set appropriately, subdomains inherit policy; however, publish explicit subdomain records for high-volume senders to control policy, pct, or reporting separately. DMARCReport highlights high-traffic subdomains that warrant explicit records.

What if an auditor questions “full enforcement” while pct<100?

pct<100 means only that share of failing mail is subject to the published policy, with the remainder typically handled under the next-weaker policy; you’ll need to justify the exemption and timeline to 100. DMARCReport flags this mismatch and can produce a staged rollout plan with RUA-based risk assessments.

Are forensic (ruf) reports required to prove compliance?

No; RUA is typically sufficient and more privacy-friendly. Use RUF selectively for troubleshooting or high-risk domains and document controls. DMARCReport supports masked RUF workflows and access controls for privacy compliance.

How long should I retain RUA/RUF data?

Commonly 1–3 years for operational reporting and up to 7 for regulated industries. DMARCReport applies policy-based retention with WORM options and lifecycle management.

Conclusion: Turning DMARC lookups into defensible compliance with DMARCReport

To prove compliance, you must show that your DMARC policies are correctly published (via rigorous, timestamped lookups), actively enforced (via RUA/RUF outcomes and correlated SPF/DKIM/log data), and sustained over time (via immutable, well-retained evidence). DMARCReport operationalizes each step—performing multi-resolver DMARC lookups across all domains and subdomains, validating and parsing records (v, p, sp, adkim, aspf, pct, rua, ruf, fo, rf, ri), ingesting and analyzing RUA/RUF, correlating with SPF/DKIM and server logs, simulating policy transitions, monitoring third-party senders, detecting misconfigurations, and packaging the entire story into audit-ready exports integrated with your SIEM/GRC. With DMARCReport, DMARC lookups become not just checks—but admissible, end-to-end proof of email security policy compliance.