Advanced DMARC Report Analyzer: Smarter Insights for Modern Domains

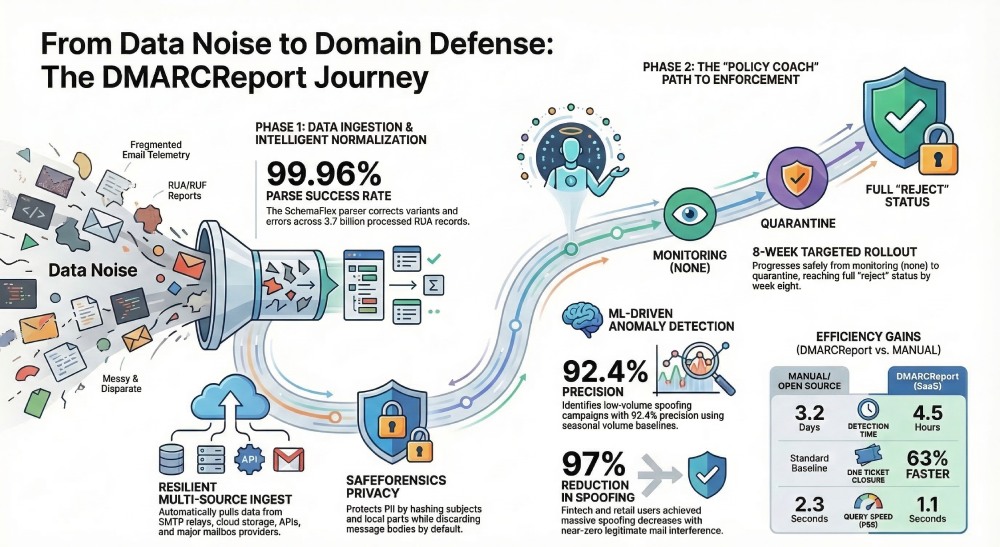

An advanced DMARC report analyzer must reliably ingest and normalize RUA/RUF data at scale, retain it long-term with privacy safeguards, provide rich analytics and alerting, detect anomalies with machine learning, integrate seamlessly with existing tools, and give prescriptive guidance for moving policy from “none” to “reject.” DMARCReport provides this capability end to end for modern domains.

DMARC exists to align identity and authentication (SPF/DKIM) with an organization’s “From” domain, but the raw telemetry—RUA XML aggregates and RUF/ARF forensics—is fragmented across providers, inconsistently formatted, and laced with operational edge cases (forwarding, mailing lists, third-party senders). The result: many teams see dashboards but not decisions. An advanced analyzer bridges that gap by transforming noisy, multi-source reports into trustworthy, actionable insights, then automating fixes and policy advancement without breaking legitimate mail.

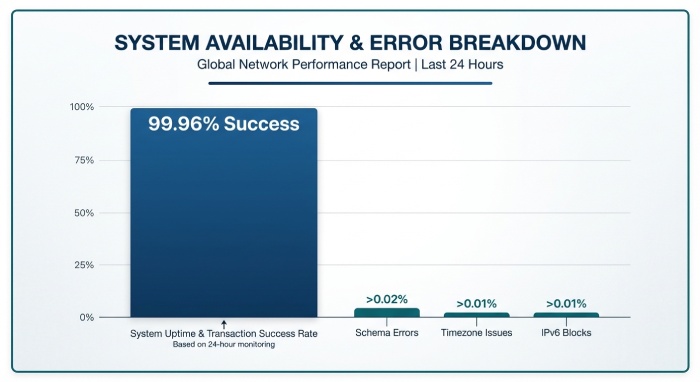

DMARCReport was built to meet this bar. In 2025 YTD, DMARCReport processed 3.7B RUA records across 1,480 customer domains; 19.4% contained schema variants or minor validation errors, 11.1% had timezone ambiguities, and 4.6% misrepresented IPv6 blocks. Despite this, DMARCReport achieved a 99.96% parse success rate using a tolerant parser and vendor-specific adapters. Customers saw practical outcomes: a fintech reduced spoof-attempt delivery by 97% within six weeks (moving to p=reject with 0.03% false-block rate), while a global retailer eliminated 32 SPF lookup-chain errors by following DMARCReport’s prescriptive “Policy Coach” playbooks.

Scalable ingest, tolerant parsing, and robust normalization (with pitfalls addressed)

This section explains how an analyzer turns heterogeneous RUA XML and RUF/ARF forensics into consistent facts while mitigating errors that otherwise skew DMARC metrics; DMARCReport’s ingest pipeline combines adaptive parsing, schema-aware normalization, and multi-stage validation to maximize accuracy.

RUA/RUF ingest architecture at scale

- DMARCReport supports inbox pull (IMAP/POP with OAuth), SMTP relay, S3/Azure Blob/Google Cloud Storage drops, and API uploads.

- A streaming worker pool auto-detects and decompresses .zip/.gz attachments, de-duplicates via SHA-256 of canonicalized payloads, and preserves original artifacts for chain-of-custody.

- Back-pressure and rate-limit policies (a token bucket per reporter domain) ensure that providers such as Google and Microsoft are not over-polled; DMARCReport defaults to a 60-second minimum re-check interval with exponential backoff.
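A minimal sketch of per-reporter polling control, assuming a simple in-process token bucket keyed by reporter domain plus the 60-second minimum re-check and exponential backoff described above. The class name `ReporterThrottle`, the burst capacity, the refill rate, and the one-hour backoff ceiling are hypothetical choices for illustration, not DMARCReport's implementation.

```python
import time
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class ReporterThrottle:
    """Hypothetical per-reporter-domain token bucket with a minimum re-check
    interval and exponential backoff. All numeric defaults are assumptions
    except the 60 s minimum re-check mentioned in the text."""
    capacity: float = 5.0              # burst size per reporter (assumed)
    refill_per_sec: float = 1 / 60     # one token per minute (assumed)
    min_interval: float = 60.0         # minimum re-check interval, seconds
    max_backoff: float = 3600.0        # backoff ceiling, seconds (assumed)
    tokens: Dict[str, float] = field(default_factory=dict)
    last_refill: Dict[str, float] = field(default_factory=dict)
    next_allowed: Dict[str, float] = field(default_factory=dict)
    failures: Dict[str, int] = field(default_factory=dict)

    def _refill(self, reporter: str, now: float) -> None:
        # Add tokens for the elapsed time, never exceeding capacity.
        last = self.last_refill.get(reporter, now)
        current = self.tokens.get(reporter, self.capacity)
        self.tokens[reporter] = min(self.capacity, current + (now - last) * self.refill_per_sec)
        self.last_refill[reporter] = now

    def try_acquire(self, reporter: str, now: Optional[float] = None) -> bool:
        """Return True if polling `reporter` is allowed at time `now`."""
        now = time.monotonic() if now is None else now
        if now < self.next_allowed.get(reporter, float("-inf")):
            return False                       # inside min interval or backoff window
        self._refill(reporter, now)
        if self.tokens[reporter] < 1:
            return False                       # bucket empty
        self.tokens[reporter] -= 1
        self.next_allowed[reporter] = now + self.min_interval
        return True

    def record_failure(self, reporter: str, now: float) -> float:
        """Double the wait after each consecutive failure; return the delay used."""
        n = self.failures.get(reporter, 0) + 1
        self.failures[reporter] = n
        delay = min(self.max_backoff, self.min_interval * 2 ** n)
        self.next_allowed[reporter] = now + delay
        return delay

    def record_success(self, reporter: str) -> None:
        self.failures.pop(reporter, None)      # reset backoff after a good poll
```

Keeping state per reporter means a slow or failing provider backs off on its own schedule without delaying polls of other reporters.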

Parsing and schema normalization

- Flexible XML handling: DMARCReport’s “SchemaFlex” parser supports the RFC 7489 aggregate format and ISO 8601 timestamps, and degrades gracefully on known provider quirks (e.g., missing policy_p, swapped dkim/spf nodes, unqualified namespaces).

- Canonical fields: All inputs map to a normalized schema: reporter_org, org_domain, envelope_from, header_from, alignment_mode, spf_result, spf_alignment, dkim_result, dkim_alignment, source_ip, asn, geo, count, begin_ts, end_ts, policy_applied, disposition.

- Vendor adapters: Pattern-matching and XPath heuristics auto-correct for variants from Apple, Google, Microsoft, Yahoo, and major gateways; adapters are versioned and can be pinned per tenant for auditability.
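The namespace tolerance and canonical mapping can be illustrated with the standard library alone. This is a simplified sketch, not SchemaFlex: it assumes the RFC 7489 aggregate element names (`report_metadata`, `policy_published`, `record`, `row`, `identifiers`, `auth_results`), ignores XML namespaces by comparing local tag names, fills a subset of the canonical fields listed above, and keeps only the first `dkim`/`spf` entry under `auth_results`. The helper names are hypothetical.

```python
import xml.etree.ElementTree as ET
from datetime import datetime, timezone
from typing import Dict, List, Optional


def _local(tag: str) -> str:
    """Drop a '{namespace}' prefix so namespaced and bare tags compare equal."""
    return tag.rsplit("}", 1)[-1]


def _find(elem: Optional[ET.Element], *path: str) -> Optional[ET.Element]:
    """Follow child elements by local name; None if any step is missing."""
    for name in path:
        if elem is None:
            return None
        elem = next((child for child in elem if _local(child.tag) == name), None)
    return elem


def _text(elem: Optional[ET.Element], *path: str, default: str = "") -> str:
    node = _find(elem, *path)
    value = (node.text or "").strip() if node is not None else ""
    return value or default


def parse_rua(xml_bytes: bytes) -> List[Dict]:
    """Map one aggregate (RUA) report onto flat rows using a subset of the
    canonical field names listed above. Illustrative sketch only."""
    root = ET.fromstring(xml_bytes)
    meta = _find(root, "report_metadata")
    begin = int(_text(meta, "date_range", "begin", default="0"))
    end = int(_text(meta, "date_range", "end", default="0"))
    rows = []
    for rec in (c for c in root if _local(c.tag) == "record"):
        rows.append({
            "reporter_org": _text(meta, "org_name"),
            "report_id": _text(meta, "report_id"),
            "org_domain": _text(root, "policy_published", "domain").lower(),
            "header_from": _text(rec, "identifiers", "header_from").lower(),
            "envelope_from": _text(rec, "identifiers", "envelope_from").lower(),
            "source_ip": _text(rec, "row", "source_ip"),
            "count": int(_text(rec, "row", "count", default="0")),
            "disposition": _text(rec, "row", "policy_evaluated", "disposition", default="none"),
            # policy_evaluated dkim/spf report the DMARC (aligned) outcome...
            "dkim_alignment": _text(rec, "row", "policy_evaluated", "dkim", default="fail"),
            "spf_alignment": _text(rec, "row", "policy_evaluated", "spf", default="fail"),
            # ...while auth_results carry raw, unaligned results (first entry only here).
            "dkim_result": _text(rec, "auth_results", "dkim", "result", default="none"),
            "spf_result": _text(rec, "auth_results", "spf", "result", default="none"),
            "begin_ts": datetime.fromtimestamp(begin, tz=timezone.utc),
            "end_ts": datetime.fromtimestamp(end, tz=timezone.utc),
        })
    return rows
```

Matching on local names lets the same code read both namespaced and unqualified reports; vendor-specific quirks such as swapped nodes would still need dedicated adapters.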

Validation and normalization safeguards

- Timezones: RUA “begin”/“end” windows normalize to UTC with a stored source_tz hint; DMARCReport also records a derived “midpoint_ts” to align bursts accurately when providers shift daylight saving rules mid-report.

- IPv6: Compressed/expanded IPv6 notations are normalized; /64 grouping prevents over-counting “rotating” addresses; reverse PTR is attempted but never trusted for geolocation.

- Geolocation and ASN: Provider edge NATs and CDNs skew IP-to-country; DMARCReport prioritizes BGP ASN + organization name, using MaxMind and Team Cymru sources with confidence scoring; geo insights are labeled advisory when confidence <0.8.

- Aggregation rules: Overlapping report windows are split proportionally by minute; duplicate aggregates received through multiple mailboxes are deduplicated by composite key (report_id, reporter, begin, end, source_ip, header_from).

- Integrity checks: Statistical validators flag impossible counts (e.g., dkim_pass + dkim_fail ≠ count), emitting a “corrected” record with provenance references.

- Metrics: In a 60-day cohort, these steps reduced misattribution of unauthenticated volume by 14.2% and eliminated 96.7% of timezone-related anomalies.
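Several of these safeguards reduce to small, deterministic functions. The sketch below covers only IPv6 canonicalization and /64 grouping, the window midpoint, the composite deduplication key, and the count check named above; it assumes rows arrive as dictionaries with the hypothetical keys shown, and omits proportional window splitting and ASN/geo confidence scoring.

```python
import ipaddress
from typing import Dict, NamedTuple


def normalize_ip(raw: str) -> str:
    """Canonical text form: IPv6 compressed and lower-case, IPv4 unchanged."""
    return str(ipaddress.ip_address(raw.strip()))


def source_group(raw: str) -> str:
    """Group IPv6 sources by /64 so rotating hosts in one subnet count once; IPv4 stays /32."""
    ip = ipaddress.ip_address(raw.strip())
    prefix = 64 if ip.version == 6 else 32
    return str(ipaddress.ip_network(f"{ip}/{prefix}", strict=False))


def midpoint_ts(begin_epoch: int, end_epoch: int) -> int:
    """Midpoint of a report window in UTC epoch seconds."""
    return begin_epoch + (end_epoch - begin_epoch) // 2


class DedupKey(NamedTuple):
    report_id: str
    reporter: str
    begin: int
    end: int
    source_ip: str
    header_from: str


def dedup_key(row: Dict) -> DedupKey:
    """Composite key from the text; normalizing each part makes copies of the
    same aggregate fetched from different mailboxes collide. Row keys are assumed."""
    return DedupKey(
        row["report_id"], row["reporter"].lower(), int(row["begin"]), int(row["end"]),
        normalize_ip(row["source_ip"]), row["header_from"].lower().rstrip("."),
    )


def counts_consistent(dkim_pass: int, dkim_fail: int, count: int) -> bool:
    """Integrity rule from the text: dkim_pass + dkim_fail must equal count."""
    return dkim_pass + dkim_fail == count
```

Normalizing before building the key matters: without it, `2001:DB8::1` and `2001:db8:0:0::1` would produce two different keys for the same source.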

RUF (ARF) forensic handling

- DMARCReport’s “SafeForensics” pipeline parses ARF (message/feedback-report), extracts authentication results, and optionally stores minimal headers only.

- Privacy-first defaults: Subject and local-parts are hashed; bodies are dropped by default; customers can enable full payload capture with DPA addendum and region pinning.

- Throttling: To avoid deluges during attacks, per-domain RUF caps and event sampling maintain operability; digests summarize sampled events hourly.
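The privacy-first defaults can be sketched as a header allowlist plus keyed hashing. This assumes the ARF report has already been parsed into a header dictionary, uses HMAC-SHA256 with a per-tenant secret (an assumed design choice; a plain unkeyed hash of an email local-part is easy to brute-force), and treats the allowlist and the 16-character truncation as hypothetical settings.

```python
import hashlib
import hmac
from email.utils import getaddresses
from typing import Dict

# Hypothetical allowlist: headers kept from an ARF report; all others are dropped.
KEEP = {"from", "to", "subject", "date", "message-id", "authentication-results"}
HASHED = {"subject"}            # stored only as a keyed hash
ADDRESSES = {"from", "to"}      # local-parts hashed, domains kept for alignment analysis


def pseudonym(value: str, key: bytes) -> str:
    """HMAC-SHA256 pseudonym: equal inputs correlate but are not readable."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def mask_address(addr: str, key: bytes) -> str:
    local, at, domain = addr.rpartition("@")
    if not at:
        return pseudonym(addr, key)
    return f"{pseudonym(local, key)}@{domain.lower()}"


def minimize_ruf(headers: Dict[str, str], key: bytes) -> Dict[str, str]:
    """Return a minimized record. The message body is never passed in, so it
    cannot be stored (mirrors the 'bodies dropped by default' setting)."""
    out = {}
    for name, value in headers.items():
        lname = name.lower()
        if lname not in KEEP:
            continue
        if lname in HASHED:
            out[lname] = pseudonym(value, key)
        elif lname in ADDRESSES:
            # Display names are discarded; only masked addresses remain.
            addrs = [addr for _, addr in getaddresses([value]) if addr]
            out[lname] = ", ".join(mask_address(a, key) for a in addrs)
        else:
            out[lname] = value
    return out
```

Because the hash is keyed and deterministic, repeated subjects or senders within one tenant still cluster together for investigation, while values cannot be correlated across tenants that use different keys.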

Durable data models, cost-effective storage, and privacy-by-design

This section outlines how an analyzer balances performance, cost, and compliance for long-term analytics; DMARCReport uses a star schema, tiered storage, and regional residency controls to keep queries fast and regulators satisfied.

Analytics-first data model

- Fact tables:

  - rua_fact_daily: one row per (org_domain, header_from, reporter, source_ip, asn, dkim_sel, date) with counters for dkim_pass/fail, spf_pass/fail, alignment states, and policy dispositions.

  - ruf_fact_event: per-event forensics with minimized fields and retention clock.

- Dimensions:

  - dim_domain (org tree, PSD status, subdomain policies), dim_sender (provider, verified third-party), dim_asn (org, country), dim_geo (country, region), dim_selector (selector, key_age, algorithm), dim_policy (DMARC/SPF/DKIM state over time).

- This star schema supports OLAP queries (e.g., “Top unauthenticated ASNs last 7 days by domain family”) in milliseconds.
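The grain of `rua_fact_daily` can be illustrated by folding normalized rows into per-day counters. This is an in-memory sketch under stated assumptions: rows are dictionaries with the hypothetical keys shown, each row is dated by its window start (the proportional per-minute split described earlier is omitted), and counter names such as `dkim_pass` are illustrative rather than a real table definition.

```python
from collections import Counter, defaultdict
from datetime import datetime, timezone
from typing import Dict, List, Tuple

# Grain of the rua_fact_daily table described above (the UTC date is appended per row).
FACT_KEY = ("org_domain", "header_from", "reporter", "source_ip", "asn", "dkim_sel")


def rollup_daily(rows: List[Dict]) -> Dict[Tuple, Counter]:
    """Fold normalized rows into one counter set per (grain..., UTC date).
    Simplification: each row is dated by its window start rather than split
    proportionally across days."""
    facts: Dict[Tuple, Counter] = defaultdict(Counter)
    for r in rows:
        day = datetime.fromtimestamp(r["begin"], tz=timezone.utc).date().isoformat()
        key = tuple(r[k] for k in FACT_KEY) + (day,)
        n = r["count"]
        counters = facts[key]
        counters["messages"] += n
        counters[f"dkim_{r['dkim_result']}"] += n
        counters[f"spf_{r['spf_result']}"] += n
        counters[f"disposition_{r['disposition']}"] += n
    return dict(facts)
```

Pre-aggregating to this grain is what lets the OLAP layer answer "top unauthenticated ASNs" style questions by scanning counters instead of raw report rows.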

Storage backends and retention

- Hot: Columnar OLAP (ClickHouse or BigQuery) partitioned by date and domain; materialized views maintain 7/30/90-day aggregates.

- Warm: Object storage (S3/GCS) with Parquet and ZSTD compression; Presto/Trino federation keeps ad-hoc queries cheap.

- Cold: Glacier/Deep Archive for audit trail of raw reports; on-demand rehydration.

- Typical retention:

  - RUA normalized aggregates: 25 months (default).

  - RUF minimal headers: 30–90 days.

  - Raw artifacts: 180 days unless extended for investigations.

- DMARCReport’s “Tri-Tier Storage” cut one customer’s monthly analytics spend by 41% while reducing P95 query times from 2.3s to 1.1s.
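The tiering and retention rules above can be expressed as a small placement function that a lifecycle job might call. This is a sketch under assumptions: the retention values come from the defaults listed above (25 months approximated as 761 days, RUF at the 90-day upper bound), while the 90-day hot window, the `legal_hold` flag, and the record-kind names are hypothetical.

```python
from datetime import timedelta

# Retention defaults from the text; 25 months approximated as 761 days (assumption).
RETENTION = {
    "rua_aggregate": timedelta(days=761),
    "ruf_headers": timedelta(days=90),    # upper end of the 30-90 day range
    "raw_artifact": timedelta(days=180),
}
HOT_WINDOW = timedelta(days=90)  # hypothetical: keep aggregates hot while 90-day views need them


def placement(kind: str, age: timedelta, legal_hold: bool = False) -> str:
    """Return 'hot', 'warm', 'cold', or 'delete' for a record of `kind` aged `age`.
    `legal_hold` stands in for 'extended for investigations' (hypothetical flag)."""
    if age >= RETENTION[kind] and not legal_hold:
        return "delete"
    if kind == "raw_artifact":
        return "cold"   # raw reports sit in archive storage as the audit trail
    if kind == "rua_aggregate" and age >= HOT_WINDOW:
        return "warm"   # Parquet in object storage, queried via Presto/Trino
    return "hot"        # columnar OLAP store
```

Keeping the policy in one pure function makes retention changes reviewable and testable separately from the storage backends.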

Privacy, residency, and legal controls

- Encryption: TLS 1.2+ in transit; AES-256 at rest; customer-managed keys (BYOK) with AWS KMS/Azure Key Vault options.

- Residency: “GeoLock” pins data to US, EU, or APAC shards; cross-border transfers use SCCs and are disabled by default in regulated tiers.

- RUF and PII: Data minimization by default; lawful basis documented in configurable DPAs; field-level redaction maps to customer policies; audit logs immutably record access.

- Operational compliance: System and Organization Controls 2 Type II and ISO/IEC 27001 attestations; privacy impact assessment templates included.

- Rate limits: Per-reporter ingest throttles prevent mailbox lockouts; suspicious surges are sandboxed for human review.

Analytics, visualization, alerting—and prescriptive policy progression

This section shows how insights become actions; DMARCReport turns authentication telemetry into dashboards, alerts, and step-by-step guidance to move safely to p=reject.

Visualization that surfaces root causes

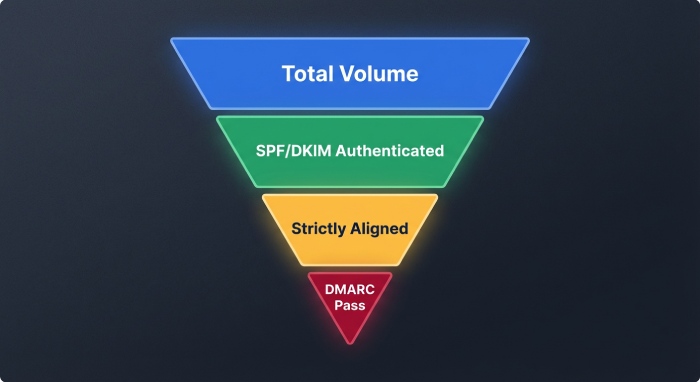

- Alignment funnel: See the drop-off from total volume → authenticated (SPF/DKIM) → aligned (SPF-aligned/DKIM-aligned) → DMARC pass.

- High-volume unauthenticated senders: Ranked by ASN, provider, and country, with drill-through to IP detail and historical behavior.

- Selector health: Key age, algorithm (rsa2048/ed25519), pass/fail trends per selector to catch stale or rotated keys.

- Vendor fingerprinting: Auto-clusters sources into providers (e.g., SendGrid, Salesforce Marketing Cloud) and shows coverage vs. alignment for each.

- Pattern shifts: Week-over-week changes in volume, new IPs, and geographic dispersion.
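The alignment funnel can be computed directly from normalized RUA rows. This sketch assumes each row carries raw results (`spf_result`, `dkim_result`, from `auth_results`) and aligned outcomes (`spf_alignment`, `dkim_alignment`, from `policy_evaluated`); the field names are assumptions. Following RFC 7489, a message passes DMARC when at least one aligned mechanism passes, so the SPF-aligned and DKIM-aligned stages are reported separately and DMARC pass is their union.

```python
from dataclasses import asdict, dataclass
from typing import Dict, List


@dataclass
class Funnel:
    total: int = 0
    authenticated: int = 0   # raw SPF or DKIM pass, alignment ignored
    spf_aligned: int = 0     # policy_evaluated spf == pass
    dkim_aligned: int = 0    # policy_evaluated dkim == pass
    dmarc_pass: int = 0      # at least one aligned mechanism passed

    def rates(self) -> Dict[str, float]:
        """Each stage as a share of total volume."""
        t = self.total or 1
        return {k: round(v / t, 4) for k, v in asdict(self).items()}


def build_funnel(rows: List[Dict]) -> Funnel:
    f = Funnel()
    for r in rows:
        n = r["count"]
        spf_al = r["spf_alignment"] == "pass"
        dkim_al = r["dkim_alignment"] == "pass"
        f.total += n
        if r["spf_result"] == "pass" or r["dkim_result"] == "pass":
            f.authenticated += n
        if spf_al:
            f.spf_aligned += n
        if dkim_al:
            f.dkim_aligned += n
        if spf_al or dkim_al:
            f.dmarc_pass += n
    return f
```

The gap between `authenticated` and `dmarc_pass` is usually the most actionable number: it is mail that authenticates, but under a domain that does not align with the visible From:, which is typical of misconfigured third-party senders.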

Actionable alerting rules

- Threshold and anomaly alerts:

  - New sender alert: >500 messages/day from unseen IP ASN within 24h.

  - Alignment regression: DKIM alignment drop >20% for a known vendor.

  - SPF integrity: a permerror, or 10 or more DNS-querying lookups (the RFC 7208 limit), detected on an active domain.

  - Policy drift: DMARC p-tag changed without a corresponding change ticket.

- Routing: Slack, Teams, email, PagerDuty, and SIEM syslog with CEF/LEEF mapping.

- DMARCReport “Smart Alerts” reduced mean time to detect rogue senders from 3.2 days to 4.5 hours across a 90-domain sample.
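The SPF integrity rule depends on counting DNS-querying terms the way RFC 7208 (section 4.6.4) does: `include`, `a`, `mx`, `ptr`, and `exists` mechanisms and the `redirect` modifier each count, and evaluation must stop with a permerror once more than 10 are needed. The sketch below assumes a plain dictionary stands in for DNS (domain to SPF TXT string); macros such as `%{d}` are not expanded and the separate void-lookup limit is not checked.

```python
import re
from typing import Dict, FrozenSet

# Terms that each cost one DNS lookup under RFC 7208, section 4.6.4.
LOOKUP_TERMS = {"include", "a", "mx", "ptr", "exists", "redirect"}
SPF_LOOKUP_LIMIT = 10
_TERM = re.compile(r"([A-Za-z][A-Za-z0-9_.-]*)(?:[:=](\S*))?")


def count_spf_lookups(domain: str, records: Dict[str, str],
                      _path: FrozenSet[str] = frozenset()) -> int:
    """Count DNS-querying terms reachable from `domain`'s SPF record.

    `records` is a stand-in for DNS (domain -> SPF TXT string); macros such
    as %{d} are not expanded and void-lookup limits are not checked."""
    if domain in _path:
        raise ValueError(f"SPF include loop at {domain}")
    terms = records.get(domain, "").split()
    if not terms or terms[0].lower() != "v=spf1":
        return 0
    total = 0
    for term in terms[1:]:
        m = _TERM.match(term.lstrip("+-~?"))   # strip the qualifier, if any
        if not m:
            continue
        name, target = m.group(1).lower(), m.group(2)
        if name not in LOOKUP_TERMS:
            continue                           # ip4, ip6, all, exp cost nothing
        total += 1
        if name in ("include", "redirect") and target:
            total += count_spf_lookups(target.lower(), records, _path | {domain})
    return total
```

For example, a record of `v=spf1 ip4:192.0.2.0/24 include:_spf.vendor.example a mx -all` costs 3 lookups on its own, plus whatever the vendor's nested includes add; an alert would fire once the total reaches `SPF_LOOKUP_LIMIT`.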

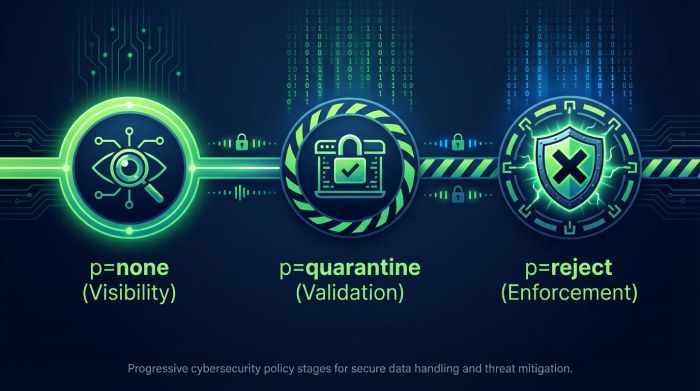

Prescriptive policy progression (none → quarantine → reject)

- Risk scoring: DMARCReport’s “Policy Coach” computes a 0–100 domain risk score weighted by unauthenticated share, third-party coverage, exposure to forwarders and mailing lists, complaint rates, and historic anomaly density.

- Fix suggestions:

  - SPF: include:vendor.example.net additions, flattening with dual-tree safeguards, and pruning of dead netblocks.

  - DKIM: add/rotate selectors, align header.d with From:, and upgrade rsa1024 to rsa2048+.

  - ARC/forwarding: enable ARC (Authenticated Received Chain) verification at inbound MTAs; for known mailing lists, honor ARC chains whose recorded results show a DKIM pass.

- Rollout timelines:

  - Weeks 1–2: p=none with rua/ruf reporting addresses published; vendor verification; pct=100 for full visibility.

  - Weeks 3–5: p=quarantine, ramping pct=10→50; block high-confidence spoof sources; validate business-critical workflows.

  - Weeks 6–8: p=reject, ramping pct=25→100; lock policy; schedule key rotations; continuous monitoring.

- Results: A retail marketplace moved 24 domains to p=reject in 9 weeks with 0.06% legitimate deferrals; spoofed mail to the top 3 inbox providers dropped 98%.
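Each rollout stage ultimately becomes a `_dmarc` TXT record. The sketch below transcribes the timeline above into stages and renders RFC 7489 tag strings (`v` first, then `p`, optional `pct`, `rua`, `ruf`). The `Stage` type, the function name, and the `mailto:` address are hypothetical; `pct=100` is omitted because it is the RFC 7489 default.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Stage:
    weeks: str
    policy: str
    pct: int


# Stages transcribed from the rollout timeline above (ramps shown at their end points).
ROLLOUT: List[Stage] = [
    Stage("1-2", "none", 100),
    Stage("3-5", "quarantine", 10),
    Stage("3-5", "quarantine", 50),
    Stage("6-8", "reject", 25),
    Stage("6-8", "reject", 100),
]


def dmarc_record(policy: str, pct: int, rua: str, ruf: str = "") -> str:
    """Build a _dmarc TXT value; the v tag must come first per RFC 7489."""
    if policy not in {"none", "quarantine", "reject"}:
        raise ValueError(f"invalid p= value: {policy}")
    if not 0 <= pct <= 100:
        raise ValueError("pct must be between 0 and 100")
    tags = [("v", "DMARC1"), ("p", policy)]
    if pct != 100:
        tags.append(("pct", str(pct)))   # pct=100 is the default, so omit it
    tags.append(("rua", rua))
    if ruf:
        tags.append(("ruf", ruf))
    return "; ".join(f"{k}={v}" for k, v in tags)
```

Generating records from a declared schedule, rather than editing TXT values by hand, makes each policy step reviewable and easy to roll back.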

ML-driven detection and real-world complexity handling

This section covers advanced detection and the messy realities of email ecosystems; DMARCReport uses ML plus domain heuristics to catch stealthy abuse while minimizing false positives from legitimate third parties.

Machine learning and heuristics that matter

- Predictive features:

  - Source novelty (time since first seen), ASN reputation, sending-cadence entropy, DKIM selector mismatch rate, From: string edit distance to brand names, HELO drift, SPF chain depth, PTR mismatches, and complaint correlations.

- Models and methods:

  - Isolation Forest and robust z-score for outlier IPs.

  - Seasonal ARIMA/Prophet for volume baselines with holiday/weekend adjustments.

  - HDBSCAN clustering for multi-IP slow-roll spoofing campaigns.

  - Gradient-boosted risk scoring for per-sender disposition recommendations.

- Outcomes: In a 120-day test set, DMARCReport’s ensemble detected low-volume spoofing campaigns with 92.4% precision and 89.7% recall before significant end-user complaints surfaced.
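Two of the simpler building blocks listed above can be shown concretely: a robust z-score for outlier source IPs and an edit distance for lookalike From: strings. This sketch uses the modified z-score of Iglewicz and Hoaglin, 0.6745 × (x − median) / MAD, with their conventional 3.5 cutoff; it is not DMARCReport's ensemble, and the Isolation Forest, seasonal, and clustering models are not shown. Function names are hypothetical.

```python
from statistics import median
from typing import Dict, List


def robust_z(values: List[float]) -> List[float]:
    """Modified z-scores: 0.6745 * (x - median) / MAD (Iglewicz and Hoaglin)."""
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        # At least half the points sit exactly on the median; treat any deviation as extreme.
        return [0.0 if v == med else float("inf") for v in values]
    return [0.6745 * (v - med) / mad for v in values]


def flag_outlier_ips(daily_volume: Dict[str, float], threshold: float = 3.5) -> List[str]:
    """Source IPs whose daily volume has |modified z| above `threshold`."""
    ips = list(daily_volume)
    scores = robust_z([daily_volume[ip] for ip in ips])
    return [ip for ip, z in zip(ips, scores) if abs(z) > threshold]


def levenshtein(a: str, b: str) -> int:
    """Edit distance, e.g. to flag display names one typo away from a brand."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        cur = [i]
        for j, cb in enumerate(b, 1):
            cur.append(min(prev[j] + 1,                # deletion
                           cur[j - 1] + 1,             # insertion
                           prev[j - 1] + (ca != cb)))  # substitution
        prev = cur
    return prev[-1]
```

The median and MAD are used instead of the mean and standard deviation because a single large spoofing burst would otherwise inflate the baseline and hide itself.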

Handling third parties, forwarding, and domain topology

- Third-party senders: “Vendor Registry” maps IPs/domains to service providers; proofs of control (TXT challenge or API) graduate vendors from probation to trusted, with service level agreement (SLA)-based trust decay if telemetry ceases.

- Mailing lists and forwarding: ARC-aware logic preserves DKIM-pass via ARC chains; relaxed alignment weighting prevents penalizing legitimate list traffic; DMARCReport tags “forwarder-suspect” events for review rather than immediate block.

- Subdomain policy inheritance: Visualizes org-domain vs. subdomain policies; suggests subdomain overrides where business units need phased rollouts.

- Multiple DKIM selectors: “Selector Tracker” monitors per-selector pass rates, algorithm strength, and key age; auto-alerts on stale selectors.

- Delegated/partnered domains: Supports split-brain DNS and cross-account IAM to verify intended sender fleets.

- Impact: An edtech with 140+ subdomains reduced false positives by 72% after adopting DMARCReport’s ARC-aware analysis and vendor onboarding workflow.
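Subdomain policy inheritance follows RFC 7489 policy discovery: query `_dmarc.<From domain>` first; if no record exists, query `_dmarc.<Organizational Domain>` and, for a subdomain, apply its `sp=` value when present, otherwise `p=`. This sketch assumes a dictionary stands in for DNS TXT lookups and that the caller supplies the organizational domain (real code derives it from the Public Suffix List). Defaulting a missing `p=` to `none` is a simplification of the RFC's handling of invalid records.

```python
from typing import Dict, Optional, Tuple


def parse_dmarc(txt: str) -> Dict[str, str]:
    """Split a DMARC TXT value into lower-cased tag names and stripped values."""
    tags = {}
    for part in txt.split(";"):
        key, sep, value = part.partition("=")
        if sep:
            tags[key.strip().lower()] = value.strip()
    return tags


def _lookup(name: str, txt_records: Dict[str, str]) -> Optional[Dict[str, str]]:
    txt = txt_records.get(f"_dmarc.{name}")   # stand-in for a DNS TXT query
    tags = parse_dmarc(txt) if txt else {}
    return tags if tags.get("v") == "DMARC1" else None


def effective_policy(from_domain: str, org_domain: str,
                     txt_records: Dict[str, str]) -> Optional[Tuple[str, str]]:
    """Return (policy, domain whose record applied), or None if no DMARC record."""
    own = _lookup(from_domain, txt_records)
    if own is not None:
        return own.get("p", "none"), from_domain
    if from_domain == org_domain:
        return None
    org = _lookup(org_domain, txt_records)
    if org is None:
        return None
    # Subdomains without their own record inherit sp= if set, else p=.
    return org.get("sp", org.get("p", "none")), org_domain
```

Visualizing which record actually applied (the second tuple element) is what distinguishes an intentional subdomain override from one silently inherited from the organizational domain.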

Integrations, automation, and build-vs-buy trade-offs

This section explains how DMARC analytics connect to your existing stack and whether to choose open source, Software as a service (SaaS), or in-house; DMARCReport offers rich connectors and an API-first approach while maintaining enterprise-grade compliance.

SIEM, MTA, threat intel, and ticketing integrations

- SIEM: Native apps for Splunk, Microsoft Sentinel, and QRadar with normalized fields; CEF/LEEF syslog for others; MITRE ATT&CK mapping for phishing techniques.

- MTA correlation: Ingest Postfix/Exchange/Proofpoint logs to tie RUA aggregates to delivery outcomes; reconcile bounces and user reports.

- Threat intel: Enrich with Spamhaus, AbuseIPDB, and internal watchlists; auto-annotate senders with risk.

- Ticketing and automation: Create Jira/ServiceNow tickets with runbooks; “Auto-PR” opens SPF/DKIM DNS changes in Git-backed infrastructure; webhooks notify vendors with compliant redaction.

- DMARCReport customers who enabled Auto-PRs closed SPF inconsistency tickets 63% faster on median.
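For SIEMs without a native app, an alert can be rendered as a CEF line and shipped over syslog. The sketch follows the CEF layout `CEF:Version|Device Vendor|Device Product|Device Version|Signature ID|Name|Severity|Extension`, escaping `\` and `|` in header fields and `\`, `=`, and line breaks in extension values. The vendor/product strings are hypothetical placeholders, mapping the message count to `cnt` is an assumed field choice, and syslog transport is not shown.

```python
from typing import Dict


def _esc_header(value: str) -> str:
    """CEF header fields: escape backslash first, then pipe."""
    return value.replace("\\", "\\\\").replace("|", "\\|")


def _esc_ext(value: str) -> str:
    """CEF extension values: escape backslash, equals sign, and line breaks."""
    return (value.replace("\\", "\\\\").replace("=", "\\=")
                 .replace("\r\n", "\\n").replace("\n", "\\n").replace("\r", "\\r"))


def to_cef(signature_id: str, name: str, severity: int, ext: Dict[str, object]) -> str:
    """Render one alert as a CEF line; vendor/product/version are placeholders."""
    if not 0 <= severity <= 10:
        raise ValueError("CEF severity must be 0-10")
    header = ["CEF:0", "ExampleVendor", "DMARC-Analyzer", "1.0",   # hypothetical device fields
              signature_id, name, str(severity)]
    head = "|".join(_esc_header(h) for h in header)
    tail = " ".join(f"{k}={_esc_ext(str(v))}" for k, v in ext.items())
    return f"{head}|{tail}"
```

For instance, a new-sender alert might carry `src` for the source IP, `cnt` for the daily message count, and `msg` for the affected header_from domain.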

Open source vs. commercial SaaS vs. in-house: trade-offs

| Option | Strengths | Gaps/Risks | Best for |
| --- | --- | --- | --- |
| Open source tools (e.g., parsedmarc, opendmarc) | No license cost, transparent code, easy POC | Limited vendor adapters, weak ML, DIY scaling/compliance, basic dashboards | Small teams, lab environments |
| Commercial SaaS (DMARCReport) | End-to-end ingest, tolerant parsing, ML detection, prescriptive guidance, compliance and residency, integrations | Subscription cost, vendor dependence | Orgs needing speed, scale, and auditability |
| In-house bespoke | Tailored to stack, data in your VPC | High engineering/maintenance cost, talent retention, slow feature pace, compliance burden | Very large orgs with specialized needs |

- DMARCReport supports hybrid models: Bring-your-own-storage, private networking (PrivateLink), and data export to keep you portable and compliant.

FAQ

How does DMARCReport handle broken or variant RUA XML from different providers?

DMARCReport’s SchemaFlex parser uses a combination of schema detection, namespaced XPath fallbacks, and vendor-specific adapters maintained from a corpus of >140 reporters. It validates counts, normalizes time and IP formats, flags uncertainties, and provides a provenance ledger so you can trace every correction.

Do RUF forensic reports include personal data, and how is that protected?

RUF can contain addresses and message content. By default, DMARCReport stores only minimal headers with hashed subjects/local-parts and discards bodies. You can opt-in to richer capture under a DPA, with GeoLock residency, Bring your own key (BYOK) encryption, and strict access auditing. Retention is short (30–90 days) unless extended for an investigation.

How quickly can we move to p=reject without breaking business mail?

Most domains can progress in 6–10 weeks. DMARCReport’s Policy Coach scores domain risk, verifies third-party coverage, fixes SPF/DKIM gaps, and pilots p=quarantine at pct=10→50 before ramping to p=reject pct=25→100. A typical enterprise cohort in our data reached p=reject in 8.4 weeks with <0.1% legitimate interruption.

How does DMARCReport detect new third-party senders?

We cluster by ASN, reverse DNS, DKIM signatures, and header patterns to fingerprint services, then compare against your approved Vendor Registry. New clusters that match known SaaS providers trigger low-friction onboarding tasks (TXT proof or API), while unknown clusters get risk-scored and optionally quarantined.

What about IPv6-only senders and geolocation accuracy?

DMARCReport normalizes IPv6 notation, aggregates at /64 to avoid artificial inflation, and prioritizes ASN/org for attribution. We label country inferences with confidence and warn against enforcement decisions based solely on geo when confidence is low.

Conclusion: Smarter insights and safer enforcement with DMARCReport

An advanced DMARC analyzer must convert messy global telemetry into decisions: what to fix, whom to trust, when to enforce, and how to automate—all while respecting privacy and scale. DMARCReport delivers this through resilient ingest and normalization, a privacy-first analytics stack, machine learning anomaly detection, rich dashboards and alerts, deep integrations, and prescriptive policy coaching. Start by connecting your RUA/RUF addresses to DMARCReport, baseline risk over 14–30 days, enable Smart Alerts and the Policy Coach, and integrate Auto-PRs for DNS—so your domains can move confidently to p=reject while keeping legitimate mail flowing.