Artificial Intelligence and the Serious Threat of Sophisticated Email Attacks and Automated Advertising Bots

Quick Answer

Artificial Intelligence has taken the world by storm lately. With the arrival of ChatGPT and other large language models (LLMs) such as [LLaMA](https://www.packtpub.com/article-hub/introduction-to-llama) and PaLM 2, writing, art creation, and many other tasks have become far easier.

Cybersecurity experts predict a rise in AI-driven cyberattacks, such as automated propaganda bots and complex, hard-to-trace email attacks that use ChatGPT and other large language models. Here is what you need to know about this **emerging cyber threat**, which may affect email authentication and create email delivery risks.

From a product strategy perspective, DMARC reporting is evolving from a security tool to a business intelligence platform, says Brad Slavin, General Manager of DuoCircle. The data in aggregate reports tells you not just who’s spoofing you, but who’s sending legitimate email on your behalf - and whether they’re doing it correctly.
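Slavin's distinction is visible in the records of an aggregate report: each record lists a sending IP, a message count, and the DMARC-aligned DKIM and SPF results for that source. The following is a minimal sketch, assuming the un-namespaced XML layout described in RFC 7489 Appendix C (some reports use a namespace and would need namespace-aware lookups). The sample report, its IP addresses, the function names, and the "aligned"/"partial"/"failing" labels are all hypothetical.

```python
import xml.etree.ElementTree as ET
from collections import Counter, defaultdict
from typing import Dict, Optional

# Hypothetical aggregate report; IPs are from the RFC 5737 documentation ranges.
SAMPLE_REPORT = """
<feedback>
  <policy_published><domain>example.com</domain><p>none</p></policy_published>
  <record>
    <row>
      <source_ip>192.0.2.10</source_ip>
      <count>120</count>
      <policy_evaluated><disposition>none</disposition><dkim>pass</dkim><spf>pass</spf></policy_evaluated>
    </row>
    <identifiers><header_from>example.com</header_from></identifiers>
  </record>
  <record>
    <row>
      <source_ip>198.51.100.7</source_ip>
      <count>15</count>
      <policy_evaluated><disposition>none</disposition><dkim>fail</dkim><spf>pass</spf></policy_evaluated>
    </row>
    <identifiers><header_from>example.com</header_from></identifiers>
  </record>
  <record>
    <row>
      <source_ip>203.0.113.99</source_ip>
      <count>40</count>
      <policy_evaluated><disposition>none</disposition><dkim>fail</dkim><spf>fail</spf></policy_evaluated>
    </row>
    <identifiers><header_from>example.com</header_from></identifiers>
  </record>
</feedback>
"""


def classify(dkim: Optional[str], spf: Optional[str]) -> str:
    """Label a record from its DMARC-aligned DKIM and SPF results (hypothetical labels)."""
    results = (dkim, spf)
    if results.count("pass") == 2:
        return "aligned"   # both mechanisms pass alignment
    if "pass" in results:
        return "partial"   # passes DMARC, but one mechanism fails alignment
    return "failing"       # fails DMARC: spoofing or a misconfigured legitimate sender


def summarize_sources(xml_text: str) -> Dict[str, Counter]:
    """Tally message counts per sending IP, grouped by classification."""
    root = ET.fromstring(xml_text.strip())
    summary: Dict[str, Counter] = defaultdict(Counter)
    for record in root.iter("record"):
        row = record.find("row")
        ip = row.findtext("source_ip")
        count = int(row.findtext("count", default="0"))
        evaluated = row.find("policy_evaluated")
        status = classify(evaluated.findtext("dkim"), evaluated.findtext("spf"))
        summary[ip][status] += count
    return dict(summary)


if __name__ == "__main__":
    for ip, statuses in summarize_sources(SAMPLE_REPORT).items():
        print(ip, dict(statuses))
```

A "failing" source is not automatically an attacker: it may be a legitimate service (a CRM or newsletter platform, say) sending on your behalf without DKIM or SPF set up for your domain, which is exactly the "doing it correctly" question the report can answer.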

According to the FBI's 2022 Internet Crime Report, published by its Internet Crime Complaint Center (IC3), 300,497 US-based victims reported phishing incidents in a single year, and Business Email Compromise (BEC) caused more than $2.7 billion in direct losses.

However, threats are also lurking around the corner: **sophisticated phishing emails**, new email delivery methods that bypass the enterprise network perimeter, and attacks that can compromise or bypass email authentication protocols such as DMARC (Domain-based Message Authentication, Reporting & Conformance), DKIM (DomainKeys Identified Mail), and SPF (Sender Policy Framework).
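DMARC builds on SPF and DKIM: a domain publishes its policy as a DNS TXT record at `_dmarc.<domain>`, and receivers consult it when mail fails authentication. A policy of `p=none` asks receivers to take no specific action on failing mail and only collects reports, so it does not by itself stop spoofing. As a minimal sketch, assuming the record text has already been fetched from DNS, the hypothetical helpers `parse_dmarc_record` and `fully_enforced` split a record into tags and check whether it enforces. The sketch simplifies RFC 7489; for example, it treats a missing `p=` tag as an error rather than applying the RFC's fallback rules.

```python
from typing import Dict


def parse_dmarc_record(txt: str) -> Dict[str, str]:
    """Split a DMARC TXT record into tags and fill in RFC 7489 defaults."""
    tags: Dict[str, str] = {}
    for part in txt.split(";"):
        part = part.strip()
        if not part:
            continue  # tolerate a trailing semicolon
        name, sep, value = part.partition("=")
        if not sep:
            raise ValueError(f"malformed tag: {part!r}")
        tags[name.strip().lower()] = value.strip()
    # The version tag must come first and must be exactly "DMARC1".
    if not tags or next(iter(tags)) != "v" or tags["v"] != "DMARC1":
        raise ValueError("record must begin with v=DMARC1")
    # Simplification for this sketch: a missing policy is treated as an error.
    if "p" not in tags:
        raise ValueError("missing p= policy tag")
    tags.setdefault("sp", tags["p"])  # subdomains inherit p= when sp= is absent
    tags.setdefault("pct", "100")     # policy applies to all failing mail by default
    tags.setdefault("adkim", "r")     # relaxed DKIM alignment by default
    tags.setdefault("aspf", "r")      # relaxed SPF alignment by default
    return tags


def fully_enforced(tags: Dict[str, str]) -> bool:
    """True when all failing mail is quarantined or rejected, not just reported."""
    return tags["p"].lower() in ("quarantine", "reject") and int(tags["pct"]) == 100


if __name__ == "__main__":
    record = parse_dmarc_record("v=DMARC1; p=none; rua=mailto:dmarc-reports@example.com")
    print(record["p"], fully_enforced(record))
```

Many domains start at `p=none` to gather aggregate reports, then move to `quarantine` or `reject` once every legitimate sender is authenticating correctly.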

What Is the Issue?

Large Language Models (LLMs) have become the go-to tool for creative or challenging work. While this has been a boon for many, cybersecurity experts foresee **security challenges** emerging from AI.

This game-changing innovation is a boon for threat actors as well. AI has made running online scams and disinformation campaigns faster and more effective. There is a need for **global cyber cooperation** and the adoption of adequate protective measures.

What Are the AI Threats?

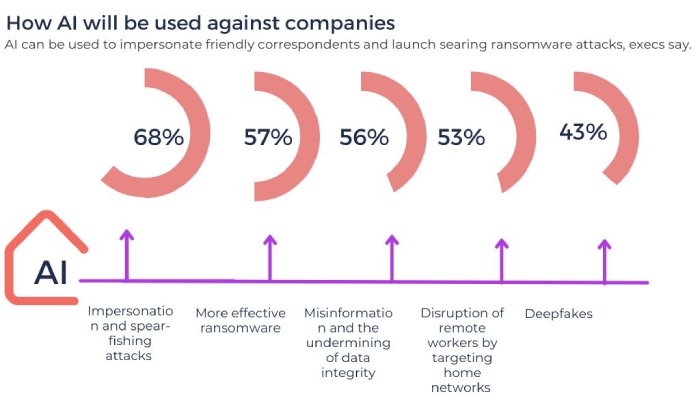

Some of the most pernicious AI threats are the following.

- Automated bots that spread disinformation and propaganda: Generative AI is at the forefront of spreading disinformation and propaganda. This content can be **easily translated** into many languages and disseminated widely to manipulate the social media ecosystem.

- Sophisticated phishing emails: Email phishing is another ever-present cyber threat that has benefited from AI. Phishing emails used to give themselves away through poor grammar, spelling errors, and incorrect punctuation. With personalized, flawless emails crafted by language models like ChatGPT, scams become much harder to spot. That makes it easy for threat actors (whether nation-states or individuals) to launch effective attacks.

- Deepfakes: AI has made it possible to fabricate audio and video, producing **realistic visuals** that are highly effective at deceiving people. ('Deepfake' is a compound of 'deep learning' and 'fake'.) Several such scams have already occurred. Hence, there is a pressing need for mechanisms such as deepfake detection tools to identify and prevent these AI-generated deceptions.
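With polished wording no longer a reliable tell, receivers can lean on signals the message text does not control, such as the SPF, DKIM, and DMARC verdicts that their own mail server records in the Authentication-Results header (RFC 8601). The following is a minimal sketch, assuming the raw message is available as a string and that `mx.example.net` stands in for your server's authserv-id; the function names and the sample message are hypothetical. Because a sender can insert its own Authentication-Results header, the sketch only trusts headers carrying your server's identifier.

```python
import email
import re
from typing import Dict

# Matches method=result pairs such as "dkim=pass" inside an Authentication-Results value.
RESULT_RE = re.compile(r"\b(spf|dkim|dmarc)=([a-z]+)", re.IGNORECASE)

# Hypothetical message: the first header is forged, the second was added by our server.
SAMPLE_MESSAGE = (
    "Authentication-Results: attacker.example; dmarc=pass header.from=example.com\n"
    "Authentication-Results: mx.example.net; spf=pass smtp.mailfrom=example.com;\n"
    " dkim=fail header.d=example.com; dmarc=fail header.from=example.com\n"
    "From: ceo@example.com\n"
    "Subject: Urgent wire transfer\n"
    "\n"
    "Please process the attached invoice today.\n"
)


def auth_results(raw_message: str, trusted_authserv_id: str) -> Dict[str, str]:
    """Collect SPF, DKIM, and DMARC verdicts written by the trusted mail server."""
    msg = email.message_from_string(raw_message)
    results: Dict[str, str] = {}
    for header in msg.get_all("Authentication-Results", []):
        authserv_id, _, rest = str(header).partition(";")
        # Anyone can add this header, so skip copies our own server did not write.
        if authserv_id.strip().lower() != trusted_authserv_id.lower():
            continue
        for method, verdict in RESULT_RE.findall(rest):
            # Keep the first verdict seen for each method.
            results.setdefault(method.lower(), verdict.lower())
    return results


def dmarc_passed(results: Dict[str, str]) -> bool:
    return results.get("dmarc") == "pass"


if __name__ == "__main__":
    verdicts = auth_results(SAMPLE_MESSAGE, "mx.example.net")
    print(verdicts, dmarc_passed(verdicts))
```

A DMARC pass only shows that a message really came from the domain in its From: header; a phisher who registers a lookalike domain can still pass, so this check complements, rather than replaces, user awareness.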

What Do Experts Say?

Richard Fontaine, CEO of the Center for a New American Security, stresses the importance of developing ways to detect deepfakes. He compares the contest between technology and internet users on one side and cyber attackers on the other to a game of cat and mouse that continues in an endless loop. Fontaine believes that an **educational process** that teaches people how to handle information online is necessary to counter the new falsified content that comes with AI.

Jeff Greene, Senior Director of Cybersecurity Programs at Aspen Digital, also described this tension between technology and AI threats as a cat-and-mouse game. Greene believes that AI will change how things work, but every new attack will be met with a defense, and vice versa.

Zohar Palti (former Mossad intelligence chief and an international fellow at the Washington Institute) added that countering AI threats will require a **two-pronged approach**: changing the education curriculum to help the younger generation distinguish right from wrong in the digital arena, and uniting nations globally in the battle against adversaries exploiting AI.

What Are Some Possible Solutions?

AI is here to stay, and humanity benefits greatly from it; it is only a matter of time before it becomes a way of life. However, **AI-related cyber risks** are a real and serious issue. AI threats are already making their way into the cybersecurity realm, so it is worth considering ways to mitigate these risks. Here are some **solutions suggested by experts**:

Fontaine argues that government-level cooperation and cybersecurity measures are essential to stop the spread of misinformation and to uphold the integrity of technology. Fontaine and his colleagues proposed a G7-style summit that brings 'techno-democracies' together. Elaborating on this concept, he noted that nations such as China, Iran, Russia, and North Korea are already united in pursuing their goals, so **technologically advanced liberal democracies** must also collaborate in the battle against AI scams.

Fontaine added that failing to unite in this battle would let threat actors achieve their objectives. Along the same lines, Jeff Greene suggested deploying zero-trust architecture globally, arguing that it would help organizations navigate a bifurcated digital world and protect confidential intellectual property and technology.

Final Words

Artificial Intelligence and Large Language Models have **revolutionized** the way people work. The advantages are immense, but so are the cyber threats. Given how rapidly AI capabilities are advancing, new and unforeseen forms of cyberattack may emerge in the near future. Hence, global cybersecurity leaders believe that all nations must join hands against adversaries exploiting AI. International intellectual collaboration, awareness campaigns, and training are the steady, **long-term** means of tackling AI threats.


Operations Lead

Operations Lead at DuoCircle. Runs project management, developer coordination, and technical support execution for DMARC Report.
